With the growing use of the cloud, there is a growing need to de-risk cloud budgets and optimize performance. In fact, a new discipline – FinOps – has been coined to address the inter-departmental complexity of managing the cloud. Achieving success with cloud cost management requires a 360-degree view of relevant data that is not supported by cloud native and third-party reporting tools. Cloud and enterprise data need to be synthesized to provide insights about cost allocation, spending forecasts, consumption, provisioning, etc.

In this on-demand webinar, learn how your organization can get the rich insights needed to control cloud costs. We shine a light on exactly why cloud cost management is a challenge and demo Envisor Cloud Analytics, our out-of-the-box BI solution that brings much-needed agility to cloud cost management.

We show how you can

- Easily access data from multiple cloud and enterprise sources.

- Get near real-time insights using Power BI.

- Report on KPIs to actively manage, allocate and forecast cloud spend.

- Leverage our pre-built data warehouse and reports to jumpstart robust cloud cost analytics.

Presenter

Keith Knowles

Senturus, Inc.

The Envisor Managing Director and Senturus FinOps Practice Lead, Keith Knowles is a FinOps Certified Practitioner with a proven track record in product development. An accomplished sales and marketing leader, he has held various positions with companies of all sizes, including Accenture, DayNine, Prism HR and Hewlett-Packard. During Keith’s time at Amdocs and Hewlett-Packard, he drove and managed strategic alliances valued at over $100 million.

Machine transcript

Welcome to today’s Senturus webinar on Agile Analytics for Cloud Cost Management.

0:12

Happy to have everybody here today, but we’re going to do just a little bit of housekeeping upfront, and then we’ll lead into the presentation.

0:19

So, first up, on your GoToWebinar control panel, you’ll see you have a questions pane there. If you do have any questions that come up during the presentation, go ahead and type those into the questions panel.

0:31

We may answer some of those during the webinar, but there will also be a Q&A at the end. So you can put your questions there, and we will answer them either in real time or at the end of today’s presentation.

0:45

One of the first questions we usually get is, Can I get a copy of the presentation? And the answer to that is yes.

0:52

If you look in the chat window in your GoToWebinar control panel, you will find a link to the presentation deck, so you can go ahead and click through that to pick up a copy of today’s deck.

1:06

A quick overview of today’s agenda.

1:09

We’ll start with some introductions, talk a bit about the growth of the cloud economy and challenges with optimization, then the necessity of some tooling to make it easier to optimize your cloud spend. We’ll talk about building your own and also do a demo of Envisor Cloud Analytics. And again, we’ll wrap up with some Q&A.

1:31

So in terms of introductions for today, we’re happy to have Keith Knowles. He has a proven track record in product development, and he is our Envisor Managing Director.

1:45

He’s held various positions with companies of all sizes ranging from Accenture to HP.

1:54

And during his time at Amdocs and HP, Keith drove and managed strategic alliances that were valued at over $100 million.

2:02

And I’m Steve Reed Pittman, as usual here to do the bookends for today’s webinar.

2:07

But Keith will really be running the show and will be our main presenter. So Keith, welcome. Thank you for being here today.

2:17

So before I turn it over to Keith, we’re going to do a quick poll. Let me open this up.

2:27

Poll here is do you have an individual or a team that’s responsible for cloud optimization in your organization?

2:36

The answers are: you have just an individual who handles cloud optimization, you have a smallish team, a largish team, or no one at all, or you may not be sure.

2:47

Go ahead and punch in your answers there, whether you have an individual or a team that’s responsible for cloud optimization.

3:10

We do find that with a lot of organizations, managing cloud spend is kind of a new territory, so you may or may not have somebody dedicated to that, or a group that’s part of their role.

3:25

So the answers are coming in. Looks pretty evenly split so far.

3:30

We’ve got about a quarter of you who have just one person, a quarter have a smallish team, a quarter a largish team, and a quarter of you don’t know. It’s really pretty even right now.

3:43

I’m going to leave it open here maybe for another 10 seconds or so.

4:02

So again, it shifted a little bit here towards the end, but it’s pretty close to evenly split across

4:10

how people are dealing with cloud optimization in your organizations.

4:17

I’m going to hand it over to Keith for the presentation. So, Keith, take it away.

4:25

Thanks, Steve. And that’s really interesting.

4:27

I mean, the first slide here is really all about the rise of the cloud economy and the growth there, and it’s just taken off, as I think everybody is aware.

4:38

If you’ve been listening to any of the earnings announcements, cloud spend is expected to decrease a little bit when you hear the forecast from Microsoft and AWS. But meanwhile, they’re kind of calming things down a little bit. And there’s a bunch of new entrants that are coming in as alternatives to AWS and Google.

4:58

And Microsoft, as far as where you want to host your cloud. So it’s going to be interesting to see what happens the next few years, but one thing is for sure: this poll shows that just about everybody has some sort of person or team that’s responsible for managing cloud spend. It’s a big part of most organizations, and we’ve seen the interest in that. You’re going to see a few more slides as we get further down through this presentation that really talk about the number of tools and different types of options for managing cloud spend. But bottom line, it’s a big deal for most organizations. We’ve seen cloud spend grow into an industry in a really short time. Cloud spend is going to be about $500 billion this year. And next year, I would bet we’ll still see it come close to that $600 billion. It’s just staggering. And that growth rate in the cloud has been about 25%.

5:56

These numbers are focused around hosting organizations, right, so AWS, Microsoft Azure, Google Cloud Platform, and others that are providing such services. It’s a really staggering 25% compound annual growth rate, which we haven’t seen at that scale in a very long time. Most companies are seeing cloud spend increase and are moving towards it.

6:27

As part of this, we’ve also seen a shift in the way business has been done as we’ve moved to the cloud, the way we procure and deploy resources has dramatically changed, right?

6:37

If you step way back in time, servers used to be acquired through a big planning process. A lot of work went into what I would consider more or less a SWAG, trying to figure out exactly what size machine we’d need for the next year. A lot of assumptions were made. We called it a science, but in the end it was a wild guess. We bought the servers, deployed them, and they went in the data center someplace. Things have changed dramatically.

7:55

Every engineer in an organization can spin up servers, and the spend is variable. Whereas before, you bought that server and put it away in your closet, in your data center, or in a co-location.

8:16

It’s very quick, and it’s a variable cost. So for people in finance and procurement, it really strikes fear into them. The other aspect about this: it’s fantastic that it’s scalable, right? So you can really turn things up very quickly.

8:34

But conversely, if you’re in a scenario where, you know, I’m launching a new application.

8:41

I want to make sure that my end users are satisfied. Want to make sure that they have very fast response times.

8:49

I very quickly just go ahead and launch a large server, and I really feel disconnected, for the most part, from the actual cost of that. So it’s very easy for cloud costs to get out of control. Because if I’m an engineer in DevOps, or somebody launching a new server, I don’t want complaints. So I’m going to go ahead and launch a server that I’m comfortably sizing, with enough headroom that there are not going to be customer complaints.

Again, in most organizations people are disconnected from their costs, and as a result, a lot of organizations will just overshoot their spend. So they’ll have a budget in place. It could be $100 million. And next thing you know, it’s coming in significantly over that.

9:33

And we’ve heard this from prospects and clients. We were at a trade show this year, and somebody came up and said, we’ve moved to the cloud, and we got used to a six-figure surprise every once in a while. And that’s what we’re here to talk about today: how do we help better manage that?

9:53

We’re not necessarily going to address the surprise cloud spend, although we do have products that do that. The core issue here is that people need to have some governance in place to manage these things, otherwise you’re going to end up with surprises. You’re going to end up just overshooting your cloud spend, and not by a trivial amount.

10:22

I mentioned that we attended the trade show earlier this year, called FinOps X. Senturus and Envisor are big supporters of and believers in the FinOps Foundation. We do sponsor them, and we leverage the framework.

10:38

The framework is about how to manage cloud spend. I should say how to optimize business value from your cloud because it’s really about getting the most value out of your cloud.

10:48

Not necessarily just cutting costs, but it’s about delivering good performance for the users, as well as maximizing the value so you’re lowering costs. FinOps does provide a framework, as I mentioned. They have a couple of things that I think are pretty interesting here. I’m going to start on the right side of this for a moment.

11:07

It’s a pretty standard kind of QA, process-improvement-type circle, a circle of life, so to speak, where you move through inform, optimize, operate. In inform, you’re trying to understand your costs. You’re trying to allocate those costs back to the business, so people are aware of what they’re actually spending.

So an engineer will feel more connected to the spend, as opposed to just clicking away and launching a server, not really understanding what the implications might be to the company. In optimize, you really figure out how to make sure that our servers are right-sized. We also find a lot of people will spin things up that they’re no longer using. So you’ll find things like unattached storage. You’ll find servers that are idle most of the time or idle all the time, which could either be turned off completely or turned down at certain hours of the day. And then, also, rates.

12:06

So, we’re going to talk quite a bit about rate optimization, and then there’s the operate phase, but this is a continuous loop. So you’re constantly going through these phases. And you may have different parts of your business in different phases at different times. But you’re always going to be going forward and looking at this and reviewing things, whether it’s on a monthly basis or weekly basis, depending on the size of your cloud spend and what the relevancy is. If you have a large cloud spend, you’re probably doing it on a continuous or near-continuous basis.

12:37

If your cloud spend is relatively small, quarterly or monthly may be adequate, but it depends on your organization and your environment.

12:46

Stepping back a little bit to the domains, and again, this is where we’re going to drill into a couple of key areas, specifically the areas around understanding your cloud costs and your usage. We’ll talk a fair amount about rate optimization and cloud usage optimization today.

We also support the other functional areas: performance tracking and benchmarking, and real-time decision making. We have anomaly detection with our cloud controlled product. On organizational alignment, our dashboard plays right into helping the larger organization understand what they are spending and how well they are optimized. Our focus today will be understanding your cloud cost and usage, rate optimization, and optimizing usage.

13:37

So, moving along. This is also leveraged from the FinOps organization, where they did a survey this past year to try to understand what the top challenges are for companies that are embracing cloud optimization. The number one key thing here is getting engineers to take action. It’s not because engineers don’t want to take action. It’s because they’re often not provided with the information. So we talk about this concept of being able to bring the information back out, help make the engineers feel more connected to the cost, and be aware of what the spend is. What we hear is that a lot of times the engineers aren’t even aware. They are completely disconnected from what that spend is.

14:30

Next is bridging the gap between the finance role and the engineering role and coming up with some common language. So, one of the things that we’ll talk about as we get into this is the ability to make rightsizing recommendations. If you have a centralized team, which many of you clearly do, about 75% of you clearly have a central team that’s focused on this. What we see is that often a finance-type person has a strong technical bent to them. They’re trying to make recommendations. They need the data.

15:09

That gives them enough detail to be able to go back to an engineer and say, Hey, here’s a right sizing opportunity for you.

15:18

And also, the more accurate that recommendation is, the better the response is typically from that engineer. So we try to work to bring that data together. That works for both teams. That helps everybody work together as a team.

Getting the right information to the right people is a big part of what we’re trying to do here. So why do you even need some tooling, right?

15:59

If you’ve seen the invoices that come from Amazon, you get a line item. I have my spend of $42,000 for the last month.

16:14

From the bill I can see a list of the servers or the number of them that I had spun up. But I will not get any utilization data from that.

16:28

So, you get to the next level of detail, But it’s going to be very high level, and I can’t tell who’s using any of it from this perspective, right? So, I get a number that goes to somebody in finance or procurement, and they’re going to look at this and just say, Well, that’s a big number.

16:47

Is it good? Bad? You don’t know from this level of detail.

16:52

So the next level of detail is getting into what AWS calls its Cost and Usage Report.

17:03

Azure has something similar. Google, GCP has something similar. So this is a sample record. You’re going to get one per hour per resource, and this is going to have the billing detail and it’s going to tell you a lot of the utilization stuff.

17:27

As the FinOps Foundation would say, they’re not human readable. You look at this, and it’s this big CSV file. It’s one record per hour, so it’s 744 records per month for each resource. Trying to put that into an Excel file becomes very challenging very quickly. So if you have 500 cloud resources, and just speaking for our company, we’ve got about 150 cloud resources, they add up quickly. So 500 cloud resources equal over a quarter million records. If you’ve got 2,000 cloud resources, you’re going to have about 1.5 million records.
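To make the record-count arithmetic concrete, here is a minimal sketch. It assumes a 31-day month (which gives the 744 hourly records mentioned above) and one record per resource per hour; the Excel limit used is the 1,048,576-row worksheet maximum. The function name is illustrative only.

```python
HOURS_PER_MONTH = 31 * 24       # 744 hourly records per resource in a 31-day month
EXCEL_MAX_ROWS = 1_048_576      # row limit of a single Excel worksheet


def monthly_records(resources: int, hours: int = HOURS_PER_MONTH) -> int:
    """Hourly billing rows produced in a month by `resources` always-on resources."""
    return resources * hours


if __name__ == "__main__":
    for count in (150, 500, 2000):
        rows = monthly_records(count)
        verdict = "fits in" if rows <= EXCEL_MAX_ROWS else "exceeds"
        print(f"{count:>5} resources -> {rows:>9,} rows ({verdict} one worksheet)")
```

Real reports usually carry more than one line item per resource per hour, so these figures are better read as a lower bound.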

18:05

Excel tops out at about a million records that it can handle. Then you’ve still got to parse it, and you’ve got to figure it out. Granted, you can make macros and try to make that quicker. But it’s not an easy thing to decipher without some tooling. Folks have tried to do this in Excel, but they quickly get frustrated, and so they start looking for tooling. As a result, there are quite a few companies out there that have gotten into this. And we’re actually going to jump into another poll here.

18:39

So, poll number two, and I’m going to let Steve run the poll here for me.

18:47

We’ll go ahead and kick that off. Thanks, Keith. So Keith is going to head into some discussion of some of the options that cloud providers offer. So, our next poll is about whether you currently use reservations or savings plans on your cloud, or clouds of choice. Do you use any of those to help lower your cloud spend on resources that are used on a regular basis? Go ahead and click your answers into the poll window there.

19:19

It looks like about half of you have already punched in your info, and it’s pretty interesting what I’m seeing so far: a pretty even distribution across these things for those of you who do know. It looks like about half of you don’t know whether your organization uses any of those options.

19:54

I’m going to go ahead and close it out, share the results with everybody.

20:03

So, it shifted a little bit in the last little bit of the poll, but it does look like about half of you are using some sort of savings option from the cloud providers.

20:21

So, I’m going to go ahead and hide these results, and hand it back to you, Keith, from here.

20:29

Thank you, Steve. And that’s interesting, right? So there’s some familiarity among folks on the call with these programs. They’re pretty powerful. We’re not going to go into the how-to, but I do want to just broach the topic of why reservations are important, especially if you’re not using them today. Take this: it’s actual AWS pricing I pulled last week for a single T4G extra-large server from Amazon. If you look at the monthly cost, that’s $116 per month on demand. So if I’m doing on-demand spend, I’m going to pay about $120 a month for this thing. My total cost for the year is going to be about $1,400.

21:26

It’s for an on-demand instance. And that’s assuming it’s running all the time. I’m not doing any scaling on this. So one server is $120 per month. If I make a one-year commitment.

21:41

And this is best case scenario from a reservation perspective.

21:46

If I make a one-year upfront commitment and payment, I cut that cost down to $68.67.

21:54

I’ve got a 41% savings on that, just by telling Amazon that I want to use that server for a year. And this is prepaid. If I don’t prepay and go month to month, but do commit to that one-year agreement, it’s still going to be about a 37% savings. You save a lot of money just by making that commitment.

22:17

And if I up that a little bit more and go to a three-year commitment, it’s over 62% savings. So it cuts my monthly cost on that to $43.89. And, again, same thing: this is prepaid. If I were to do just a monthly pay with the three-year commit,

22:33

I’m probably looking at about a 58% saving off that list price. So reservations are really an important part of saving money with the cloud, right? I actually should say reservations and savings programs, because the standard reservations were the first thing. AWS recently, about two years ago, introduced savings programs, or savings plans, I should say, with a little bit more flexibility in some ways and a little less in other ways.
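The savings percentages quoted above follow directly from the monthly prices. The sketch below reproduces that arithmetic using the figures from the talk ($116 on demand, and, reading the garbled transcript amounts as $68.67 and $43.89, the prepaid one-year and three-year prices); those readings and the function name are assumptions for illustration, not current AWS pricing.

```python
ON_DEMAND_MONTHLY = 116.00  # on-demand monthly price quoted in the talk

# Effective monthly prices for prepaid commitments, as read from the transcript.
COMMITTED_MONTHLY = {
    "1-year, paid upfront": 68.67,
    "3-year, paid upfront": 43.89,
}


def savings_pct(on_demand: float, committed: float) -> float:
    """Percentage saved relative to the on-demand price."""
    return (on_demand - committed) / on_demand * 100


if __name__ == "__main__":
    for term, monthly in COMMITTED_MONTHLY.items():
        pct = savings_pct(ON_DEMAND_MONTHLY, monthly)
        yearly = (ON_DEMAND_MONTHLY - monthly) * 12
        print(f"{term}: ${monthly:.2f}/month, {pct:.0f}% off, ${yearly:,.2f} saved per year")
```

The same function applied to a whole fleet of always-on servers shows why finance needs to understand the commitment: the discount only pays off for resources that really do run for the full term.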

23:02

Azure is about to do the same thing. So having a team that can manage, apply, and leverage reservations is really important. A big part of this is always getting finance to understand what you’re doing when you’re making these commitments, because a lot of people say, you know what? We went to the cloud because it’s elastic.

23:17

I can turn it on, turn it off, scale, and save money because I turn things off. It’s amazing.

23:23

Well, the reality is there are a lot of servers that you cannot necessarily scale down in real time. Or you can’t even turn them off. You’re not going to turn off your SAP instance, as an example, at nighttime when nobody’s using it. You’re going to leave it running. So reservations, savings plans, all of these things are very important, and we do support those. We’ll talk a little bit more about those in a moment.

23:45

But I’m going to shift a little bit. You know, with all this, you would think that there’d be plenty of options from a tooling perspective, right? Hopefully, everybody kind of feels, based on what we’ve shared so far, that this is an important topic that you do need to make some investment in to manage your cloud spend. And there’s a ton of potential solutions out there.

24:06

And then you would think that there’d be a Goldilocks, but the reality is a lot of companies don’t necessarily find success with just one tool.

24:23

Among respondents to this FinOps survey, the average was 3.7 tools needed to do the job, and probably the most interesting thing that I find from this is the number one set of tools that people are using. They’re not third-party tools.

24:38

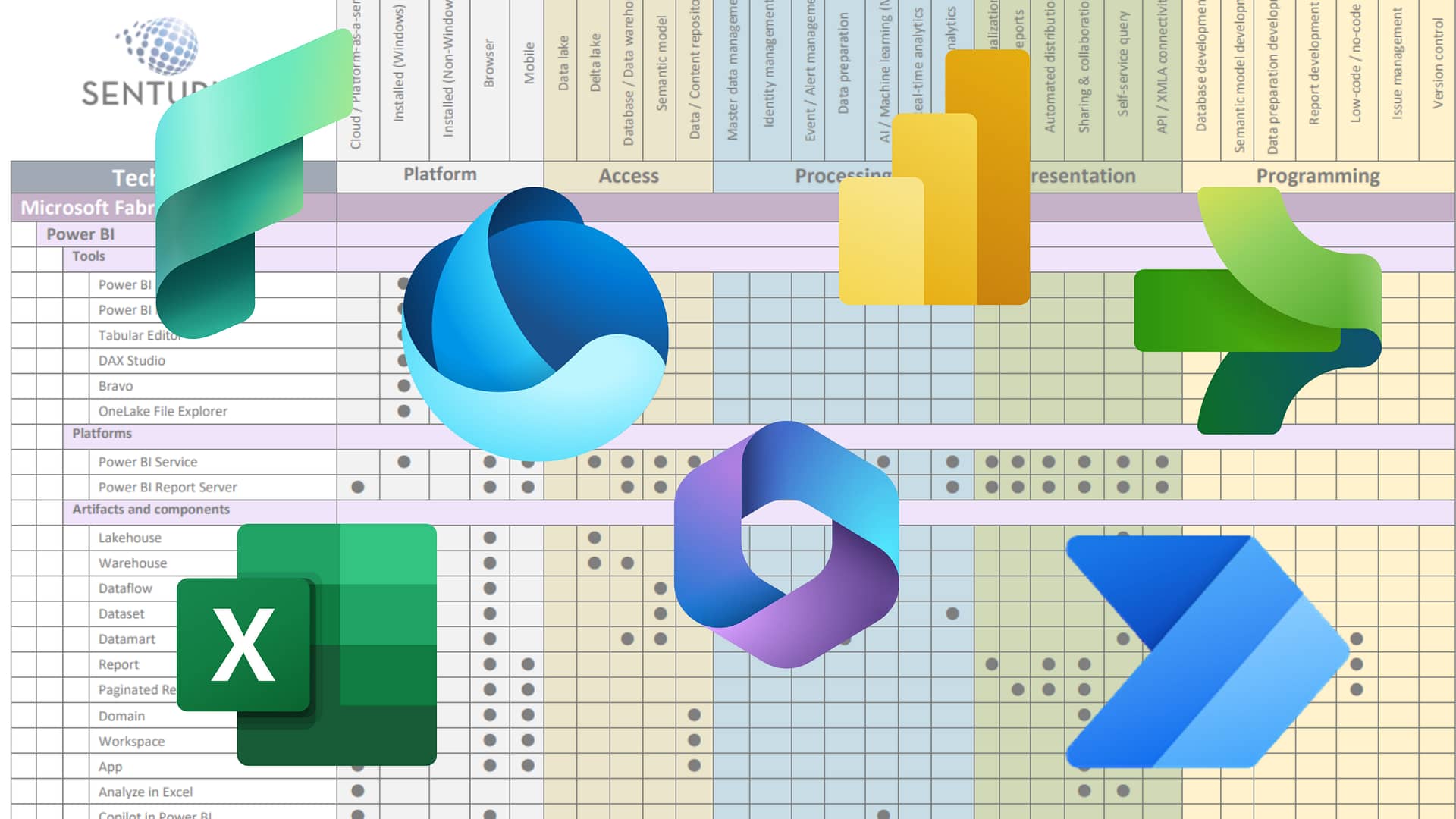

They are the core tools that are provided by the cloud platforms themselves: AWS Cost Explorer, the Azure Cost Management tool, and Google Cloud’s version of those cost tools. The interesting thing is, when you look at the next set of what people are doing, they’re building their own. They’re building their own Power BI dashboards. They’re building their own Google Cloud Platform dashboards, or they’re building a home-grown tool, potentially in conjunction with that. So, it’s really interesting. So you ask yourself, why is this?

25:18

So, why aren’t they leveraging an off-the-shelf solution?

25:22

And what we find is that it’s because they’re inflexible, right? These tools have rigid report structures.

25:28

Folks want to be able to see the data in their own reporting structure, right? So if you have a larger company that has multiple divisions, they want to split that up by division. They want to see it with their own chart of accounts embedded. They want to use their own structure. They want to see the data in a format that’s consistent with the other reports within the environment. So the flexibility is really important for them, and also being able to use a tool of choice to create reports. On the next slide, I’ll talk a little bit more about that.

26:02

Being able to use Power BI, as an example, and be able to very quickly create reports. Not just download to a spreadsheet and do this every month, but create a report in Power BI that I can then share and use over and over again. I no longer have to do that extra step of exporting out of one of these other tools that I just showed and reshaping and refactoring that data. I can do that all in Power BI if I build my own tool and use that data warehouse. And the data is extensible, right? A lot of folks want to incorporate their own enterprise data.

26:36

And we have a lot of people out there that are doing things slightly different than the norm, right. So, being able to innovate and go beyond what these out of box solutions offer? So having that flexibility is really pretty important to people, for all the reasons above, and if hearing it from me is not enough, here’s some quotes from some folks that we’ve talked to, right?

26:59

So, you see, these are some big companies that are basically out there looking for something that’s more powerful, looking for something that brings in their own data, looking for something that allows them to define their own metrics. These things are all stuff that our clients and prospects are out there looking to do. So this is not me talking about it. These are, again, actual quotes from folks asking, how do I bring in external data and then understand something such as the cost to serve one of my customers? You see a theme here with these last quotes about bringing in my own data to be able to understand, what’s the cost to serve when somebody comes to my website, as an example? What’s the cost to process an order on my website?

27:49

What’s my cost to serve an incremental customer? These are all things that are really important for folks to be able to answer, and that’s really kind of the holy grail of where cloud cost optimization goes. It’s about understanding the business value and impact, and ultimately understanding the cost to serve my organization at a fine grain. I want to be able to understand the cost to serve, potentially, a client, or marketing, or the marginal cost of adding one additional user on my website. If I understand all these things,

28:28

then, when it comes time at the end of the year, whether I’m a public company that needs to forecast or a private one that needs to do a forecast,

28:36

I can very easily use my sales forecast.

28:40

I’m expecting to add 10%, 20%, hopefully 25%. New customers. I can multiply that out by these, various internal costs, and then I can figure out what my cloud spend for next year is going to be very quickly and accurately.

28:55

That’s a very high-level example, but you can drill down very deep into this if you’re getting to those unit economics and metrics, being able to understand the cost to serve your various customers, both internal and external. We also hear people wanting to measure the cost of their engineering teams, benchmark internally, and understand that.
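The forecasting idea described above (multiply expected customer growth by a known cost to serve) can be sketched in a few lines. All numbers and names here are hypothetical, and the sketch assumes the cost to serve one customer stays constant as volume grows, which a real forecast would want to check.

```python
def forecast_cloud_spend(current_customers: int,
                         annual_cost_to_serve: float,
                         growth_rate: float) -> float:
    """Projected annual cloud spend given customer growth and a unit cost.

    annual_cost_to_serve is the cloud cost attributed to one customer per year.
    growth_rate is a fraction, e.g. 0.20 for 20% more customers.
    """
    projected_customers = current_customers * (1 + growth_rate)
    return projected_customers * annual_cost_to_serve


if __name__ == "__main__":
    customers = 1_000        # hypothetical current customer count
    unit_cost = 50.0         # hypothetical annual cloud cost per customer
    for growth in (0.10, 0.20, 0.25):
        spend = forecast_cloud_spend(customers, unit_cost, growth)
        print(f"{growth:.0%} growth -> about ${spend:,.0f} next year")
```

The same shape works for any unit metric, such as cost per order or cost per website visit, as long as the cost allocation behind the unit cost is trustworthy.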

29:17

So, having your own data, being able to see it the way you want is really powerful. Now, just furthering on this again. So, so this is a couple of people, but who’s actually doing this in practice, right? So, who’s actually going out there and building out, building their own solutions.

29:35

A lot of the who’s who of the world, right? So, a couple of innovators here. Netflix is a highly customized business. But in reality, when you look at the rest of these folks, they’re all pretty much companies that you’d expect an off-the-shelf solution would support, right? But almost all of these have built their own, or are in the process of building their own. So it’s a big deal. It’s really important.

30:00

So to have that, flexibility, to be able to bring in additional Enterprise data and use that in your calculations, so that’s where having your own data warehouse makes a lot of sense. And, really brings life to the numbers for folks, when they can then see it.

30:15

When you can go back to that engineer, who’s responsible for provisioning that server but not necessarily for the cost, and be able to share with them, hey, listen, here’s the cost to serve a client, and we’d like to figure out how to cut that down.

30:30

So, it’s really important to be able to bring in those additional data points from within your company to make the data meaningful and bring it to life for people beyond that core FinOps team that you may have. OK, so, challenges, right?

30:48

So, as we get to this point in time, you want to build your own. The challenges, right? These are the common things when you’re building out a solution. How do I fund this internally, when I’ve got folks that are competing for it and my manager says, you know what, just buy an off-the-shelf solution? So it’s always a challenge here: lacking the resources and time to build it. There’s the need to keep up with the cloud, which is constantly changing. Ingesting and normalizing data is something we haven’t really talked much about. One of the things that we bring value to, and that you’d also want to do in your own data warehouse, is this: if you’re multi-cloud, you’re going to want to be able to bring all that data into your system.

31:33

And normalize it, so that if I’m looking at data from AWS and looking at data from Azure and Google, and whomever else I may have.

31:40

In my platform, I want to be able to have everything normalized.

31:44

So if I look at a reservation from each one, they all will have slightly different nuances, but I want to understand how that works. I want to be able to see that all in one area.

31:54

I want to be able to understand, am I optimized across all of my clouds, and potentially even benchmark across those providers to understand, is one performing better than the other?

32:06

And maybe I need to shift from cloud provider A to cloud provider B, or C, etc., right? Then there’s always time to market. So, trying to get something spun up quickly is always important, right? So, hey, I need to spend the next 6 to 9 months to build a data warehouse.

32:25

To do my cloud optimization may not be a positive selling point internally.
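The normalization step mentioned a moment ago (bringing AWS, Azure, and Google data into one shape) might look something like the sketch below. The raw column names are simplified stand-ins chosen for illustration and are not the providers’ exact export schemas; the sketch also assumes every cost is already in the same currency.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRecord:
    """One provider-neutral billing row."""
    provider: str
    resource_id: str
    usage_date: str   # ISO date, YYYY-MM-DD
    cost: float


# Hypothetical mapping from each provider's raw column names to common fields.
FIELD_MAPS = {
    "aws":   {"resource_id": "ResourceId",    "usage_date": "UsageStartDate",   "cost": "UnblendedCost"},
    "azure": {"resource_id": "ResourceId",    "usage_date": "Date",             "cost": "CostInBillingCurrency"},
    "gcp":   {"resource_id": "resource_name", "usage_date": "usage_start_time", "cost": "cost"},
}


def normalize(provider: str, row: dict) -> CostRecord:
    """Translate one raw billing row into the common CostRecord shape."""
    fields = FIELD_MAPS[provider]
    return CostRecord(
        provider=provider,
        resource_id=str(row[fields["resource_id"]]),
        usage_date=str(row[fields["usage_date"]])[:10],  # keep only the date part
        cost=float(row[fields["cost"]]),
    )


def total_by_provider(records: list) -> dict:
    """Sum normalized cost per provider so clouds can be compared side by side."""
    totals = {}
    for record in records:
        totals[record.provider] = totals.get(record.provider, 0.0) + record.cost
    return totals


if __name__ == "__main__":
    rows = [
        ("aws", {"ResourceId": "i-123", "UsageStartDate": "2023-01-01T00:00:00Z", "UnblendedCost": "1.50"}),
        ("azure", {"ResourceId": "vm-9", "Date": "2023-01-01", "CostInBillingCurrency": 2.25}),
        ("gcp", {"resource_name": "vm-a", "usage_start_time": "2023-01-01 00:00:00", "cost": 0.75}),
    ]
    print(total_by_provider([normalize(p, r) for p, r in rows]))
```

Once every row has the same shape, reservations, tags, and unit metrics can be layered on top without provider-specific logic in each report.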

32:33

Of course, We can help with that, right?

32:36

So, that’s always something we can talk about. Envisor Cloud Analytics is our product. It’s an out-of-box data warehouse for cloud optimization. We do multi-cloud data sources. Something a little bit unique about our solution: we are pulling in data in five-minute intervals, so we do have very fine-grained data. We also bring in cost and performance data. If you look back, and I’m not going to go back to that, but the CUR file, when you look at that, it gives you some peak data, but it’s not going to give you enough data to be able to make a recommendation to an engineer to resize a system. So we bring in both that cost data and fine-grained performance data, so you can make recommendations and decisions about whether to resize or rightsize one system or another. We really jumpstart your organization.

33:42

Our solution allows you to extend and integrate your own data sources. We’re probably going to have another webinar that talks more about how you would do that. Today, I’m going to share a little bit more about what we have available in it, and we’re constantly building out, but I’m going to tell you more about what we have in the solution as opposed to necessarily showing you that extensibility, but it is there.

34:03

If you’re familiar with Power BI and data warehouses, you’re very familiar with how easy it is to create your own visualizations.

34:10

You can link together numerous data stores to come up with these custom cuts of the data and share that internally. I’m going to give you a quick product demo.

34:48

OK, so this is our main dashboard when you log into our product.

34:52

This is Power BI. If you’re not familiar with Power BI, this is the Power BI interface. This is the web version of Power BI, by the way, and there is a desktop version. You can customize the reports for how you want them, but we give a number of out-of-box reports to help you very quickly understand the overall health of your cloud and that spend. And we also provide that performance side of things. So I have the ability to go in here and look at a couple of key things. So, my overall spend for the timeframe. I’m looking at 30 days. In this case, I’ve got about $29K of spend over the past 30 days.

35:33

I can start looking at things such as idle spend. You don’t want to waste the money, but you may not have a choice in some cases, right? And then I’m managing about $8,000 per month in reservation spend on this stuff. Those reservations are saving me 1.25K, so about $1,200 is being saved by my reservations. Then I’ve also got about $3,000 in spot. So all of these things come together, right? If you start looking at how do I manage and save money, all these things kind of come together.

36:07

The combination of your savings strategies comes down to this: if you can use spot, that’s the cheapest thing. If you can’t use spot, the next cheapest thing is turning something off. And if it’s something that can’t be turned off, then reservations or some sort of savings plan adoption is the way to go.
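The ordering described above can be written down as a small decision function. This is only an illustrative sketch: the function name and the two boolean inputs are assumptions made for the example, not part of Envisor Cloud Analytics.

```python
def pick_savings_strategy(can_use_spot: bool, can_turn_off: bool) -> str:
    """Return the cheapest applicable strategy, in the order given in the talk.

    Both flags are hypothetical inputs for illustration: whether the workload
    tolerates spot interruptions, and whether it can be shut down when idle.
    """
    if can_use_spot:
        return "spot"
    if can_turn_off:
        return "turn off when idle"
    return "reservation or savings plan"


# A workload that cannot run on spot and must stay up around the clock:
print(pick_savings_strategy(can_use_spot=False, can_turn_off=False))
```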

36:25

There are certain things to look at here. I have a reservation potential chart over here. I’m not going to go into the details of reservations completely, but this is showing you that there’s a certain commit level at which reservations become favorable in your case. In this case, it’s right around this low peak here, which a lot of people might refer to as the waterline.

36:56

And if I go too far above that, I start to waste that reservation, so to speak. I start to have reservations that are not being used. If I go too far below that, my coverage is not as high as it could potentially be, right? So I have this waterline. And in this case, we’re doing pretty well. From my perspective, just looking at this at a glance, I’ve covered up to about my waterline.

37:22

I probably could go up a little bit higher and still have a good savings plan, but this looks healthy to me at a glance. And I have the ability to drill into the different machines within this environment.
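The waterline idea can be sketched numerically. The example below assumes a simplified model in which demand is a count of identical instances per hour, a reserved instance is paid for every hour whether used or not, and anything above the commit level runs on demand. The demand series and both hourly rates are made-up values, not figures from the demo.

```python
def cost_at_commit(hourly_demand, commit, on_demand_rate, reserved_rate):
    """Total cost if `commit` instances are reserved for every hour."""
    # Reserved capacity is paid for in every hour, used or not.
    reserved_cost = commit * reserved_rate * len(hourly_demand)
    # Demand above the commit level is billed at the on-demand rate.
    overflow_hours = sum(max(d - commit, 0) for d in hourly_demand)
    return reserved_cost + overflow_hours * on_demand_rate


def best_commit(hourly_demand, on_demand_rate, reserved_rate):
    """Commit level (the 'waterline') with the lowest total cost."""
    levels = range(0, max(hourly_demand) + 1)
    return min(
        levels,
        key=lambda c: cost_at_commit(hourly_demand, c, on_demand_rate, reserved_rate),
    )


# Hypothetical instance counts per hour and hourly rates.
demand = [4, 4, 5, 9, 10, 6, 4, 4]
waterline = best_commit(demand, on_demand_rate=1.0, reserved_rate=0.6)
print(waterline)
```

With these numbers the lowest-cost commit sits at the base load of 4 instances. Committing above it pays for reserved hours that go unused, and committing below it leaves steady usage at the on-demand rate.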

37:36

So, if you use tagging, and I’m sure those of you who said you have a team in place have all your machines tagged, we use those tags to help with hierarchies and to translate the data into a form that makes sense to your organization.

37:56

And you can drill through into the resource detail to understand what’s going on. So, I just picked this at random, right? I’ve got an Amazon EC2 instance. This is not a spot instance; it would have been flagged. It’s showing me what I’m spending on this, my daily spend on this relatively small machine: $40 per day. It does add up very quickly, as you can probably see. As I mentioned, we have the CPU utilization data, and this is going to be an average over time. I can drill into it, change my timeframe, and look at just a single day, but these are averages over time.

38:32

But looking at this, I’m probably a little oversized on my CPU.

38:39

My max CPU is averaging just over 50%, and if I were to draw a trend line, my average CPU utilization is quite a bit lower than that. As I mentioned, we provide both cost and performance metrics, right? So, a lot of what we’re trying to do is help somebody drill into this data. They can look at the details and say, you know what? I need to look further at this machine; all my parameters are underutilized.

39:13

For the most part, maybe there’s an opportunity for me to right-size this machine. And that’s what we’re trying to provide, because a lot of times you will hear people say, you know what? I saw the max CPU on this machine, looking at one-hour data, or five-hour data, or five-minute data.

39:30

The max CPU for this machine may have been, you know, 25%. And then they go back to the engineer and say, hey, let’s right-size this machine. And the engineer very quickly comes back and says, you know what? We’re maxed out on network on that machine, or we’re maxed out on memory. So we’re trying to provide enough detail here for a cloud optimization or FinOps professional to make a recommendation and have an intelligent conversation with their engineering counterpart, as opposed to just saying, hey, we think that’s too big. So these are very powerful metrics for figuring that out.
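The point about checking more than CPU before recommending a resize can be illustrated with a short sketch. It assumes each metric arrives as a list of five-minute utilization percentages; the metric names, the 40% thresholds, and the sample values are all hypothetical.

```python
from statistics import mean

# Hypothetical per-metric limits, in percent. Not taken from the product.
THRESHOLDS = {"cpu": 40.0, "memory": 40.0, "network": 40.0}


def rightsize_candidate(samples, thresholds=THRESHOLDS):
    """Flag a machine only if every metric's peak stays under its limit.

    samples maps a metric name to a list of five-minute utilization
    percentages for the period being reviewed.
    """
    report = {}
    for metric, limit in thresholds.items():
        values = samples[metric]
        report[metric] = {
            "avg": mean(values),
            "max": max(values),
            "under": max(values) < limit,
        }
    candidate = all(entry["under"] for entry in report.values())
    return candidate, report


# CPU looks idle, but memory peaks at 88%, so this is not a safe resize.
samples = {
    "cpu": [12.0, 18.0, 25.0, 9.0],
    "memory": [30.0, 35.0, 88.0, 33.0],
    "network": [5.0, 7.0, 6.0, 4.0],
}
candidate, report = rightsize_candidate(samples)
print(candidate, report["memory"]["max"])
```

Looking at CPU alone would have flagged this machine. Requiring every metric to pass is the conservative choice when the recommendation has to hold up in a conversation with engineering.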

40:08

OK, I’m going to go back into some additional views that we have in the data.

40:13

So from a spend perspective, we’re all about trying to help you realize your costs and understand where you want to drill in and what’s going on.

40:23

So if I look at the top, one of my top spends here is this very first resource.

40:30

I’ve got some peak time on this. So this looks pretty well balanced from my perspective. I’ve got some idle time. I’ve got a lot of green, so it’s active and it’s being used. Then I start looking at, potentially, this one right here.

40:44

So I’m roughly half green, half idle. That’s something I probably want to check into. As I get down into some of these, I can very quickly see both the potential opportunity from a savings perspective and whether I want to spend time on something where roughly 80% is active and a very small percentage is idle.

41:08

So, we’re trying to help you very quickly identify opportunities for improvement and also see whether your environment is healthy. We do have a projected spend here, which is kind of interesting. We’re starting to help folks understand what that spend is going to be and predict it out.

41:26

It’s not as detailed as a true forecast, but it will give you some directionality as to where your spend is heading over the next month or so.
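The talk does not say how the projected spend is calculated. As a rough illustration of a directional estimate, the sketch below uses a plain run-rate projection: the average of the most recent days multiplied by the number of days ahead. The function name, the seven-day window, and the sample figures are assumptions.

```python
def project_spend(daily_spend, days_ahead=30, window=7):
    """Naive run-rate projection: recent average daily spend times days ahead."""
    recent = daily_spend[-window:]
    run_rate = sum(recent) / len(recent)
    return run_rate * days_ahead


# Hypothetical daily spend in dollars for the last ten days.
history = [100.0] * 10
print(project_spend(history, days_ahead=30))
```

A run-rate like this ignores seasonality and planned changes, which is why it gives direction rather than a true forecast.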

41:42

And then resource utilization.

41:43

This gets more detailed, with the ability to drill in and understand the health of your system. And again, there are different scenarios here that tie back to that initial waterline, or potential savings, chart.

42:03

That scenario will map fairly closely to it, because I’m showing the potential savings from a reservation perspective in dollars. Reservations, for those of you familiar, are in hours, right? You’re basically looking at CPU hours. So that first-level metric is very high level. You want to start drilling into more detail to understand things such as utilization, and we’ll get into that.

42:28

I’m going to shift gears here a little bit into rate optimization. Consider this kind of a preview. It’s a product, and we’re getting close to releasing the rate optimization views.

42:39

This is telling me the overall health of my reservations, or just a quick status, I should say. I can drill into these reservations. Savings plans will be available here in the very near future.

42:57

When we talk about reservations, one of the things I didn’t point out is that we use the term fairly generically. In this dataset I have both Amazon and Microsoft Azure, and we will be adding Google in the near future. So we are multi-cloud. You can filter on all of them, so you can drill in and look at them combined, or you can look at just one provider if you want to.

43:25

OK, so, I’m going to drill into reservations and spend in here.

43:29

The two main metrics when you’re looking at reservations are really the utilization and coverage rates. And, you know, potentially without the context of the initial graph that we saw, the waterline graph,

43:46

This may look a little wonky but in this case, we’re above 70%.

43:51

I think industry best practice is probably higher than 80%, but we do have pretty variable demand in this demo data. So, being above 70 percent is not necessarily a bad thing. And if you do a trend line, we’re probably above 80% from a timeline perspective. So, you’re going to have some fluctuation in that data. On coverage, some companies, when you look at this, may be at, you know, 25% coverage, depending on the variability. But we do have a highly variable demand pattern for this environment. And that’s where you’ve got to balance between spot and reservations.
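Utilization and coverage are commonly defined as follows: utilization is the share of purchased reserved hours that were actually used, and coverage is the share of total usage hours that ran under a reservation. The sketch below computes both under a simplified model of identical instances per hour. The demand series and commit levels are made-up values.

```python
def utilization_and_coverage(hourly_demand, commit):
    """Return (utilization, coverage) for a fixed number of reserved instances."""
    purchased_hours = commit * len(hourly_demand)
    # In each hour, at most `commit` instances can run under the reservation.
    used_reserved_hours = sum(min(d, commit) for d in hourly_demand)
    total_usage_hours = sum(hourly_demand)
    utilization = used_reserved_hours / purchased_hours if purchased_hours else 0.0
    coverage = used_reserved_hours / total_usage_hours if total_usage_hours else 0.0
    return utilization, coverage


# Hypothetical instance counts per hour with a variable demand pattern.
demand = [4, 4, 5, 9, 10, 6, 4, 4]
for commit in (4, 6):
    util, cov = utilization_and_coverage(demand, commit)
    print(commit, round(util, 3), round(cov, 3))
```

Raising the commit from 4 to 6 lifts coverage but drops utilization below 100%, which is the trade-off the speaker describes for variable demand.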

44:29

And try to make sure you’re using the correct types of data, or the correct metrics. It’s not just one single metric that goes into making these decisions.

44:40

And we’re trying to bring together, you know, the ideal balanced scorecard scenario. I can go into the reservation detail for a specific reservation and see more details.

44:51

And you really, again, work towards understanding the health of that environment.

44:58

So, we do point out utilization and breakeven days. A pretty key metric when you’re deciding on a reservation is the breakeven timeframe, and it’s a quick way to understand whether this makes sense. Reservation savings and breakeven really tend to play out more when you’re doing a prepay scenario. Does it make sense for you to make your own reservations? Again, this is a single reservation that we’re looking at.
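One common way to define breakeven for a prepaid reservation is the number of days until the on-demand charges avoided add up to the upfront payment. The sketch below assumes that definition. The $40 per day on-demand figure echoes the demo instance, while the upfront amount is a made-up value.

```python
def breakeven_days(upfront, on_demand_daily, reserved_daily=0.0):
    """Days until cumulative savings cover the upfront payment.

    Returns None when the reservation never pays for itself, that is, when
    the recurring reserved charge is not lower than the on-demand charge.
    """
    daily_saving = on_demand_daily - reserved_daily
    if daily_saving <= 0:
        return None
    return upfront / daily_saving


# Hypothetical all-upfront reservation for a machine costing $40/day on demand.
print(breakeven_days(upfront=8760.0, on_demand_daily=40.0))
```

If the machine is likely to be retired or resized before the breakeven day, the prepayment is hard to justify.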

45:25

We have the ROI metrics here as well, along with actual savings and projected savings.

45:30

And if your utilization of the reservation isn’t high, you’re going to have low actual savings, as you’re seeing in this scenario, which is partly because of, again, that variability in demand.

45:44

So, OK. I’m actually going to shift gears now and head back to the presentation and close out.

45:51

And we’re going to open it up for Q&A after about two or three slides.

46:10

One of the things that we do from a Senturus perspective, and it is our heritage, is developing BI solutions, whether it’s a data lake or a data warehouse pulling in data from multiple systems of record. So we do have our product, which we just showed you, Envisor Cloud Analytics. It is available out of the box and will give you a data warehouse. If you need help, we can augment beyond that and also incorporate additional data. So, going back to that previous slide, if you’re in a scenario where you don’t have the resources to do this but you anticipate wanting the flexibility, you could leverage our product. We start adding value on day one. We spin up our system in less than 24 hours, and you have access to a data warehouse for cloud cost optimization. Try that on your own.

47:07

It’s unprecedented to have that. So you have that data warehouse available in 24 hours. We can augment that over time as it makes sense as well.

47:16

So, with that said, I’m going to jump ahead two more slides.

47:23

We are offering a free assessment with your own data. So, if you guys have interest in understanding or learning more about our product, it takes about 15 minutes to connect our system to your data.

47:40

There’s no download or anything. It’s purely a quick connection to your environment. We’ll start bringing the data into our system, and within 24 hours we can set up time with you and review your cloud KPIs. We are happy to do that for you.

48:17

If you are using reservations, we can help you see the power and flexibility of using a data warehouse. Even if you’re not using one today, you should be able to see the power of using a data warehouse instead of a rigid, inflexible tool.

48:38

So if you do need help beyond what we offer, Senturus has a long heritage of doing that. We can help pull that data from multiple systems, help you with your report construction, and integrate other data sources.

48:52

So, with that said, I’m actually going to turn this back over to Steve, and we’re going to close out and open up for questions.

49:04

So anybody who has questions, you can type those into the questions panel while I’m talking here. Just a quick overview of Senturus: we have a ton of additional resources available to you on our website. So by all means, go over there and take a look at our Knowledge Center. We’ve been committed to sharing our BI expertise for over two decades now, so go to senturus.com/resources for lots of valuable information.

49:37

We also have another upcoming webinar on November 17th, KPIs from disparate data sources in minutes. We’ll actually be having a guest Senturus presenting that day and my client will be hosting the webinar along with me. And we hope to see you there on November 17th.

49:58

And just a quick background on Senturus. We’ve been in the BI business for over 20 years.

50:04

We concentrate on modernizations and migrations across the entire stack and across multiple platforms. We provide a full spectrum of services, including Power BI, Cognos, Tableau, Python, and Azure, in addition to proprietary solutions. So we’re happy to help you out with anything you may need on the business analytics side. We really shine in hybrid BI environments, which, of course, are very common today.

50:32

We know many of you already, we’ve been in business over 20 years, over 1300 clients, and over 3000 projects. Strong history of success here.

50:53

We are hiring.

50:54

So, if you’re interested in joining the Senturus team, we’re currently looking for a FinOps consultant as well as a Microsoft Power BI consultant. If you think you might fit the bill, you can send us your resume at [email protected]. You can also find the job descriptions on the website.

51:15

Yeah. And I will say, I did actually jump to this page because of the FinOps consultant role. We actually have a couple of openings in the FinOps area. So, if you have any interest in FinOps and in helping the Envisor team and our practice, we’d love to hear from you.

51:38

Thanks, Keith. And with that, we’re going to go ahead and open up for Q&A. Scott Felton just posted a note to the chat window. If you are interested in learning more about FinOps data solutions or anything related to Senturus, you can grab some time on Scott’s calendar, and there’s a link there.

52:01

We do have one question here, Keith, from Alex, asking whether we use AWS and/or Azure cost management data as the data we ingest, or whether we use other data APIs. And I think Bob Looney, our VP of software, might be best suited to answer this. Bob, I don’t mean to put you on the spot.

52:35

We definitely use API-driven data access. You can actually get at some of those things both ways, but we use a blend of operational and financial data coming into the system, so that we can show both the spend and the utilization.

52:54

Can we also ingest logs into the BI reports?

52:57

It’d be outside of our data warehouse, but absolutely. That’s one of the reasons we’ve gone to this sort of solution, so that you can bring in all sorts of these other data metrics and blend with your other organizational data.

53:12

You know, things from website hits to your financials to your number of engineers on staff, to get some more meaningful metrics to forecast with as well as report on.

53:29

Thanks, Bob. One other thing, and this isn’t really a question but more of a comment on my part: one of the things that I find really useful in the Cloud Analytics interface is this opportunity for identifying right-sizing options for servers. For anybody who has spent a lot of time in AWS Cost Explorer or Azure Cost Management, you probably know there are tools in there to help you identify options for reservations and savings plans and that kind of thing. But what the providers don’t really do is tell you, hey, maybe you just need a smaller server, rather than reserving it for a long period of time.

54:09

So, personally, I think that’s a great benefit here, just because there are often cases where you have a server that is way underutilized, and maybe the answer is to resize that to be a smaller server rather than committing to running it for a certain amount of time under a reservation to get a discount.

54:28

So I find that really useful.

54:31

Also, I pity anybody who has ever had to look at the line item detail on an Azure or AWS invoice.

54:38

I personally don’t use Google Cloud, so I don’t know how Google does it, but I know what a nightmare it is to try to make sense out of those invoices, to try to translate that, into meaningful metrics for your organization, to figure out the best path forward.

54:55

So being able to ingest and normalize the data directly from the clouds helps, rather than trying to make sense out of invoices or out of the different formats that things are presented in.

55:07

And depending on whether you’re using the AWS tool for evaluating costs versus the Azure tool versus other clouds. So just having all that normalized from all the clouds is really beneficial.

55:26

And with that, do we have any other last questions here before we wrap up?

55:37

A question from Peter.

55:41

We replaced the old-school temporary staging store with a data lake.

55:47

Is dirty data sticking around forever balanced by the benefits of flexibility to scale up and down? So, Peter,

55:55

I’m not sure that I understand the question.

56:03

Is it about scaling up and down the database?

56:10

I don’t know if you can answer in the Chat window there.

56:17

I mean, maybe there’s a better way to pose the question. Bob, I’m not sure how to address that.

56:24

But let’s give him a moment to respond. There’s one other question here that I’ll take very quickly.

56:33

It’s: what other data do you see organizations blending with cloud spend to create useful metrics? One of the most common things that we see, and that we’re asked about, is being able to bring in sales data and engineering hours.

56:49

And then being able to translate that back into, effectively, a cost to serve on both sides, right? So, being able to come back and say the marginal cost to serve a client is X. The cost of the engineering team is another thing, right? Especially in larger organizations that have multiple engineering teams, being able to benchmark across the various organizations matters. And it depends on your company’s philosophy. I mean, Google talks about cloud cost optimization and wants it to be, you know, this blameless concept.

57:23

Other companies want to very publicly display who’s the best. So, however you want to shape that, the tool gives you the flexibility. But those are the most common things that we see people trying to bring into the system.

57:43

OK, so we have a rephrased question here. Yeah, so Peter is saying we have a lot more dirty data now than before data lakes, and is that compensated for?

57:55

By the other benefits of scaling cloud resources?

58:02

And to be honest, I don’t know how the cost of scaling up the data works. I don’t deal directly with that personally. Bob, I don’t know if you have any insight.

58:16

But, maybe we can take that one offline and follow up directly since we’re at the top of the hour.

58:38

And with that, Keith, while you go ahead and advance to the final slide, just a quick thank you to everybody for attending today’s webinar.

58:47

We do appreciate all of you being here.

58:56

You can contact us by phone. You can also contact us by email. And, as I said, there’s a link, there, in the chat window, where you can set up a meeting with Scott Felton, if you’d like to discuss Envisor Cloud Analytics or really anything related to your business analytics environments.