In this on-demand webinar, learn the different ways Power BI lets you source and prep data for analysis. We examine four different scenarios you should consider that span desktop to enterprise use. We review how they serve different analytics and reporting needs and discuss the pros and cons of each approach, including their impact on scalability, security and performance.

What method you choose depends on the type of analytics you want to perform, the ease-of-use factor for your end users, and your organization’s need for data security, reporting performance and data integrity. Learn which of these approaches is best suited to your organization’s requirements:

- Directly querying operational systems

- Querying the data warehouse

- Dataflows

- Datasets

Learn the best way to marry your data to Power BI.

Presenter

Michael Weinhauer

Director

Senturus, Inc.

Michael’s responsible for the development of the Senturus Analytics Connector, which lets Tableau and Power BI use Cognos as a data source. He has been designing, delivering and selling analytics solutions for over 20 years. Before Senturus, Michael held positions at Oracle, IBM and SAP, acquiring a wealth of hands-on, practical BI and Big Data experience.

Read moreQuestions log

Q: My organization is interested in using Power BI Premium, but I’m not, would you recommend using Premium?

A: Pro and Premium tiers are designed for enterprises looking to use paginated reports, share content outside their organization or require enhanced administration tools like isolation on dedicated capacity or regional targeting, Read more: https://senturus.com/blog/power-bi-premium-demystified/.

Q: How and where do I specify a query composite type?

A: The storage type is set at the query level from a dropdown menu found in the properties for a given query (table/view) in the modeling section of a Power BI file. Read more: https://docs.microsoft.com/en-us/power-bi/desktop-storage-mode.

Q: Power BI has the prepare data capability, is it considered to be an ETL tool as well?

A: Power BI is recognized as having self-service ETL capabilities. Power BI’s data preparation capabilities are Power Query (Get Data and Edit Queries) which allow for transformation of data. The second step is modeling the data, which occurs in the modeling section of a Power BI file. Dataflows use Power Query in the cloud and will always import the data, so they are very much akin to an ETL process. Queries created in Power BI files only pull the data into Power BI (i.e., ETL the data) if import or dual is selected.

Q: If I import an Excel spreadsheet does it auto map the columns?

A: Power BI will attempt to map the columns properly, by doing things like promoting headers and converting some data types. Depending on your source file, these transformations may need to be changed and/or augmented.

Q: Can Power BI connect to data models created using Planning Analytics? If I have some cubes created using Planning Analytics (formerly TM1) can I use those cubes as my data source in Power BI?

A: Senturus offers an Analytics Connector that allows using TM1 cubes via Cognos Analytics in Power BI (or Tableau). Learn more, request a trial or to purchase: https://senturus.com/products/analytics-connector/cognos-powerbi/.

Q: How does this differ from MicroStrategy?

A: MicroStrategy has its roots in the traditional BI vendor landscape but has done a nice job of morphing its technology over time into a more agile, Gartner Mode 2 stack. A complete comparison is outside of the scope of this webinar, but with MicroStrategy Desktop for example, you can do many of the things you would do in Power BI Desktop.

Q: I have created a table in my SQL database, it has around 80 million records, it takes ages to refresh, can you advise us on how we can speed up the refresh?

A: There may be several reasons for your issues. The first could be the general performance of the source database. In addition, many transformations implemented on the data may not be query folding back to the source system. The underlying source system may need to be optimized by creating views (to implement query folding) and/or index creation to improve performance. If you want further assistance, please call us at 888 601 6010, ext. 1 and we can help troubleshoot your issues.

Q: Can I use import for some source data and Direct Query for other source data?

A: Yes, you can. It depends on the data source and what you are doing to the data in your transformation and modeling. Learn more about composite model: https://docs.microsoft.com/en-us/power-bi/desktop-composite-models and DirectQuery + aggregates: https://docs.microsoft.com/en-us/power-bi/desktop-aggregations.

Q: Can I turn off the import function for SSAS cubes to prevent users from manipulating a governed data source?

A: Not that we are aware of.

Q: Are dataflows only in the cloud?

A: Yes. Dataflows are Power Query in the cloud (powerbi.com) and they always import the data into Azure Data Lake Gen 2 Storage (ADLG2). Learn more about dataflows: https://docs.microsoft.com/en-us/power-bi/service-dataflows-azure-data-lake-integration and see a list of the advantages of doing so: https://powerbi.microsoft.com/en-us/blog/power-bi-dataflows-and-azure-data-lake-storage-gen2-integration-preview/.

Q: Do we need a subscription to use Azure ADLG2 or does it come with a Premium license?

A: It comes with the Premium license and there are additional sub-tiers for customization based on your organization’s needs.

Q: Can a dataflow import into a Power BI dataset?

A: Yes, dataflows can be used in the creation of a dataset. It requires using Power BI Desktop to source the dataflow, then publishing the dataset to Power BI service. Learn more: https://docs.microsoft.com/en-us/power-bi/service-dataflows-create-use#connect-to-your-dataflow-in-power-bi-desktop.

Q: Are dataflows sufficient as the semantic layer to my data, as compared to something like Cognos Framework Manager?

A: Dataflows allow for the transformation and movement of data into Azure. It does not allow for modeling, which needs to be done in Power BI Desktop. To complete the cycle, you need to publish to a dataset on Power BI service. Datasets are complete data models ready for analysis, which is like a published Framework Manager model. Keep in mind, FM models don’t support transformations, so transformations occur upstream (before) the FM model in a Cognos environment.

Q: I have a database with four tables summing up to 1.4 terabytes, what connection type method should I use to report from that?

A: Power BI should compress this data 10-100 times depending on the cardinality, it would be much smaller if only specific columns are required and much smaller if aggregated at the level required for analysis. If you are summarizing this data at a high level, you have more flexibility. Keep in mind Power BI can only return 1 million records in DirectQuery, so you would likely need to summarize this data anyway. You can also take advantage of Power BI’s aggregate management capabilities to support big data use cases. Learn more about limitations: https://docs.microsoft.com/en-us/power-bi/service-get-data#considerations-and-limitations

Q: Is there a way to change the data source for a dataset from one workspace and dataflow to a different workspace and dataflow without having to go through each query in a dataset? We want to change the data source for SQL from one server/environment to another. We are trying to create separate dev/prod environments and have come across issues with re-pointing datasets to be connected to different workspaces and dataflows.

A: A referenced query can be used for this functionality. You would point to workspace A, dataflow 1 in the parent query and all child queries referencing the parent query. You would then be able to change the parent query to workspace B, dataflow 2 as required. Learn more: https://docs.microsoft.com/en-us/power-bi/guidance/power-query-referenced-queries.

Q: Is there going to be another webinar to review options that are available with Premium licensing?

A: We’re considering it, but it’s not on our immediate docket.

Q: Are dataflows replacing data warehouses?

A: Dataflows are used to create entities (like Time, Customer) and can begin to supplant some of the functionality of enterprise data warehouses (EDW). However, a properly designed EDW still provides important capabilities like hierarchy management, slowly changing dimension, late arriving fact and other support not found in dataflows. Dataflows are part of a larger enterprise data strategy that may include an EDW. Remember, an EDW can take on many forms, from simple to very sophisticated.

Q: When dataflows are refreshed is the data cached and stored at ADLSGen2? And can datasets be made to have a live query to the dataflow?

A: Yes, the data from dataflows is always pulled into ADLSG2. Datasets can use dataflows as sources. DirectQuery to dataflows is coming in the near future with the public preview scheduled for April 2020. Learn more: https://docs.microsoft.com/en-us/power-platform-release-plan/2020wave1/business-intelligence/dataflows-directquery.

Q: Does Senturus provide Power BI UX and UI services?

A: Yes, we absolutely do – please contact us at us at 888 601 6010, ext. 1 or [email protected]

Machine transcript

0:20

I will be covering four different ways to source and prepare your data.

0:31

So first a couple of housekeeping items before we get into the heart of the presentation, you have the GoToWebinar control panel up you can restore and minimize it using the orange arrows and while everyone’s microphone is muted. So everyone can hear the presentation. We strongly encourage you to submit any questions you might have via the question pane in the control panel.

0:56

We generally try to answer the questions live during the presentation if for some reason we aren’t able to do that, we will complete a question log and we post it at Senturus.come along with the deck and a recording of the presentation which brings me naturally to the next slide. Everyone always asks frequently and throughout the presentation. Can I get a copy of the deck and the answer is an unequivocal yes. It is available on Senturus.com resources. The URL is on the screen there.

1:31

While you’re there, you might as well bookmark it because it has a ton of great other assets there. So you’ll find the recording there and the question log to the extent that we need to publish that. After a quick introduction. I’ll walk you through an overview of Power BI, will talk about the different query methods in some of the trade-offs and considerations and along with demonstrations as well as sort of where you fall in the BI maturity curve.

2:01

And kind of high-level use cases and then we’ll cover some will do a quick Senturus overview for those of you who are unfamiliar, but we encourage you to please stick around for the full duration as we give you some great additional almost entirely free resources at the end and we cover the Q&A at the end of the presentation. So by way of introduction, my name is Mike Weinhauer how I am a director here at Senturus. I’ve been building selling

2:31

and designing analytics solutions for the entirety of my 25 plus year career the last five plus of which have been spent with Senturus and I’ve worked with Cognos and Oracle as well as Tableau and Power BI so I know enough to hopefully to be dangerous and impart a little knowledge here before we jump into the presentation. We always like to get our finger on the pulse of our attendees in our audience.

3:01

And the first poll I’d like to launch here is ask whether your organization is currently using Power BI premium and pretty straightforward. Yes. No, or you don’t know so you basically got the free version. If you’re just using the desktop your Power BI Pro just kind of the next tier up and then Power BI premium is the top tier with dedicated capacity and greater capabilities.

3:36

75% here okay, great and I’ll share this so what about 50% are not using Power BI premium. Third of you are and 20% don’t know so thank you very much for that. And then we have a second poll today. I’m asking how do you connect to Power BI data currently and this is a select all that apply. So do you import the data in other words you doing flat file dumps from?

4:13

The system and pulling those in or are you importing from your data source. Are you doing direct queries to a transactional or operational system? Are you querying a data warehouse or SQL Server analysis services in some form are using data flows and or data sets.

4:32

So get those votes in there or at about 50% I’ll give it a few more seconds here.

4:53

All right. So it looks like full almost two-thirds of you do import that’s interesting. Although Imports can take on a lot of different flavors. I’d be curious how many of those are flat file exports and how much of those are importing from one of the other sources we mentioned then you know half or so are doing direct query to some transactional system. That’s interesting. I’m about half are doing data warehouse, SSAS 20% using data flow.

5:20

So that’s kind of low, although not entirely surprising and about half of you are using datasets great. So thank you.

5:29

That’s always interesting to see and hopefully interesting for you to see what your colleagues here on the call are doing so first a little bit of background in terms of Power BI for those of you who may be unfamiliar with the tool and the platform. It kind of comes out of the rise of self-service and we’re following a journey, perhaps of a young eager analyst and a bright person there.

6:00

They wash their hands frequently with warm soap and water and don’t hoard toilet paper and get gouged for hand sanitizer and hopefully they’re not watching the stock market today, but they have business data sitting in a bunch of flat files and they don’t want to bother it with any of this yet because of various reasons things like resources politics are competence and there’s a lot to sort of figure out and they want to figure out how to: are the data how to potentially store this data landed somewhere how to model it and how do I create my reports and visualizations off of it? And ultimately, how do I share that? And then on top of that, how is that going to perform adequately and then the linchpin here is how do I do all that myself and can one tool really do all that. This used to be a very disjointed process wherein at least part of this was owned by 8 IT people.

6:53

And the on the on the back side here, perhaps ETL and landing of the data modeling was yet, another function the visualization was still another and generally sort of the deployment fell to maybe another person so you can kind of have these walls in place over here and that’s what led to the rise of tools like Power BI and without going through this entire chart. This kind of gives you a brief history of or rather.

7:23

a comprehensive history of all the Microsoft BI offerings. Fast forward to 2010 here.

7:30

You see three tools come into play Power Quake power query, Power Pivot and Power view giving you the ability to prepare data to do self-service through Power query visual a store in model and do some self-service here in Power Pivot and then Power view giving you some ability to do reports and dashboards and then in 2012 we saw Microsoft come out with analysis services tabular, which is their version of a columnar in-memory data store. So very scalable, high performance data store and Power BI came out in 2015 that really has overtime integrated all of those four components into the desktop tool.

8:17

So in other words one tool can sort of do it all and that’s on that integration is only gotten tighter over time and It’s and it spans beyond just the platform to the Power BI service or powerbi.com as we call it sometimes into other and other areas like visual studio and the Power Platform and Azure. So what is Power BI in a nutshell? It’s a self-service analytics solution that allows you to prepare and present data in your organization’s and it provides those five essential functions. It provides a query engine that provides an in-memory data storage repository.

8:50

It allows you to do modeling provides a tool and a deployment portal and it’s really a set of integrated tools ranging from desktop to Power BI service report server or mobile app Power BI embedded and data gateways and Excel and I’d argue really there are six functions that it covers here because whereas those walls existed before between the data modeling and the or the ETL rattle rather and the data modeling and visualization. These tools really have broken that down and enable.

9:23

Able this iterative capability where you can prototype rapidly in a tool like this iron out the kinks get your calculations and your business logic and everything and then push those Upstream for greater levels of reuse. So there’s another really arrow in there that you could argue as a sixth concept. This one gives you we’re going to use this diagram heavily in this presentation and apologize.

9:46

it’s a little bit of an eye chart, but in terms of the core concepts architecture and flow, we’re going to start with Power BI desktop which consists of connectors that allow you to connect to multiple different types of data sources and virtually any conceivable type of data source, and you can create queries that define how to extract and transform your data sources access via those connectors and modeling capability that allows you to dtefine tables relationships columns and measures that you subsequently use in report and reports that present data from those data sets on one or more pages of visuals.

10:23

Now keep in mind that your data set in Power BI desktop might just be the model and you could be querying this live or you do have the ability to import into a data set. That is a Power BI tabular model within built into Power BI desktop. Then the workflow is generally to publish content out to powerBI.com aka the Power BI service.

10:54

And that consists of a number of different features and some of which will cover today. So you have going from left to right you have data flows which will cover and they pull data from sources actually perform transforms and load them into Azure, which is Microsoft’s Cloud offering and it’s called Azure data lake gen2. So now my terminology there is leave it to Microsoft to come up with a mouthful.

11:16

We’ll just call that as your data Lake tend to or a DLG to on top of that you can publish either from Desktop or create and powerBI.com against data flows or data sources data sets which are published analysis ready models that are reusable and certifiable and we’re going to cover those as one of our one of our methods and dashboards. Of course reports. Same thing as here there data that could present in from data sets on one or more pages of visuals dashboards allow you to present visuals that get pin from one or more reports on powerBI.com.

11:52

And then the last component our on premise data gateways so you can connect to Cloud sources or you can connect to on premise data sources leveraging what they call the on-premises data Gateway. So we’ll refer to this diagram throughout now.

12:09

There’s always when you’re when you’re thinking of a method and as we went from writing a Blog on this to formalizing it in a in a webinar it became clear that the four ways really could be really became sort of 5 and could be 6 or more and it really kind of is a spectrum here. So we try to touch upon the main ways and a couple permutations and the method that you land on will always be kind of the sum or the net of various trade-offs and considerations. So you’ll have various needs based upon your use case for your environment or certain limitations you have. So maybe you have a real-time scenario or you know, maybe latency is not so important.

12:54

Do you have a need to Only store that data once or is it okay to have another copy of it is the data that you’re that you’re reporting against is an analysis ready, or is it more transactional operational in nature? What are your cost constraints? What are your performance requirements? How much is this need to scale? It? Does your data reside on Prem or in the cloud or does your solution need to reside on Prem or in the cloud?

13:16

Do you have a governance requirement or as agility the order of the day and of course security and that’s just I’d say maybe comprehensive but it’s a kind of a short list here. So there’s always trade-offs and considerations and that’s going to drive what you end up choosing and those will change over time. And then before we get into the methods here real quickly. The Microsoft uses some definitions for their various query methods and for purposes of this webinar.

13:45

I’m generally referring to the query as it is executed by Power BI desktop because these terms are used in different places in the Microsoft stack. So when I’m when I’m talking about I’ll try to clarify that but direct query refers to a live query to data that is modeled in Power BI and that is separate and distinct from a live query which is alive query from Power BI to pre model data. So it with a direct query the model lives in Power BI with a live query the models the model.

14:23

Live somewhere else. It’s either a data set or it’s been modeled in SQL Server analysis services, for example, so those are the two main ways you connect via live. So when you see that import is when you’re pulling that data from The Source into a Power BI data model into a tabular model that’s used by Power BI and then the last one here is sort of a hybrid. It’s a composite or dual where aggregate data gets pulled into an import and then the query engine uses intelligence at runtime.

14:53

Time to determine if it can fulfill the query via the import or if it’s going to use direct query. All right, so hopefully that terminology that background gives you a little Foundation to work with so, all right. So we need an option 1 here and this is the direct connection to transactional data. Now you are here in the diagram, right you’re operating in Power BI desktop.

15:18

So this is kind of the you may be new to Power BI or you’re doing a prototype or you have a real-time data need and the keys to this one are that transactional systems involve very large generally very complex schemas. They’re optimized for data input like taking orders and Manufacturing runs and things of that nature. They are not optimized for query performance Vector sort of diametrically opposed to it.

15:48

Then third normal form you have orders in in our demonstration at least orders isn’t Tables and products exists in three and the challenges arise here are that are that while it’s quick and dirty and you can get to it easily and get started quickly and maybe cheaply there’s a lot of modeling that it relies upon and your transactional data doesn’t have things like a Time Dimension or a Geo Dimension. There’s a lack of history and change capture.

16:13

There’s no hierarchies and it generally won’t have all of the data or doesn’t always have all of the data that you need so you it’s heavy on transformation in modeling. You have a low reuse especially across different tool sets. Right if you use other tools in your organization, but it’s great because your data is really fresh and it’s good for operational purpose and it doesn’t require you moving data. So what does that look like if I drill into it a little more while you’re either doing a direct connect or an import from the transactional system into Power BI desktop or in a lot of cases.

16:50

We see people actually creating reports or Flat file or Excel dumps out of that transactional system to be pulled into Power BI desktop. So let’s take a look at what that might look like.

17:03

And this is kind of the longest demo of all of them because we’re not presuming a whole ton of knowledge with Power BI so I want to give you a feel for what this is like, so I’m using the Microsoft adventure works data set so it’s a sample company and In this instance, I have about 70 tables in this ERP system and it’s very deals third normal form and all that good stuff and there’s about 20 different views in here. So I’ve studied this fortunately. So if you have the benefit of SQL Server management Studio or something of that nature, then you’re able to sort of figure out what’s going on with that schema and go to try to report off of it. So my first step here is to go and connect to my database.

17:52

And I’m going to connect to my SQL server database. I’m running this all locally for convenience and you’ll see my data connectivity mode. I have the ability to import that or I can do a direct query and I’m going to connect it’s going to bring up my available databases. And the first one I have here is my second one I have here is my oil TP schema and you can see here.

18:18

This is this would be sort of tricky to figure out potentially for see who is new to this and this is a small one modern ERP systems have upwards of 30,000 tables. So you can imagine what that would be like, you’re not even going to be able to browse those. And so what I’m going to do is a basic analysis and all these in the interest of time. I’m going to pull in three different tables. I’m going to pull in some sales order detail information. I’m going to pull in customer information and I’m going to pull in product information and what I want to do because this is again a transactional system.

18:51

I need to model this in such a way that Analysis friendly. I’m going to click transform data and what this is going to do is it’s going to issue some queries to bring me some preview data and bring me into Power query editor and this is where I do my transforms and you can see this is a very powerful environment allows me to do a lot of modeling the data like removing columns removing Rose removing nulls removing duplicates. I can fill data I can pivot data there’s a ton of things I can do here.

19:19

So bear with me while I do a little bit of modeling here, and I’m going to for starters. My person address in this case is going to become my customers View. And what I’m going to do is Power BI does a nice job here of providing references to tables that it’s linked to through referential integrity.

19:39

And so what I can do is sort of get rid of the ones I don’t want and I don’t have to explicitly pull those into my model and I can just use this little expand icon and it’s going to let me go out and I can pick The tables that and columns That I Want from that table, so I’m going to expand that out. So now my view contains my products table it contains or sorry, it contains my address and it contains some of my state information. So I’ve taken out columns that I don’t want. I’m going to rename this to state name.

20:22

And then my sales territory is snowflake doubt to that table. So I’m actually going to pull in that information.

20:30

So I get my region name.

20:41

And I’m good to go. Okay, so I’m good with my sales. I’m going to do the same thing with my product table here.

20:49

Call that products and my product tables there’s about 20 or 30 tables. And what I want to do here for purposes of my analysis is I really want my product hierarchy. I want my product subcategory information and I want my product category information. So I’m going to delete all these other references that I don’t really want.

21:11

The kind of clean up this analysis and then I’m going to expand the product information to include the product subcategory and I’m going to include this link out to the product category. So that’s going to expand out.

21:29

I’m going to rename this name column to product subcategory.

21:40

Right of this guy and then I’m going to expand out my Master on my product category and then I’m going to rename the product category.

21:58

Great. So now I have all my product information. And then lastly I am going to put in my sales orders. So I’m renaming this and I’m going to expand sales orders to include my sales order header.

22:15

So I’m kind of normalizing this information and using those table references to minimize the unify those into a single View and to make this sort of a nice little mini star schema if you will.

22:32

Okay, great. So now this has this has all my sales metric information as a bunch of good information and I’m more or less ready to start my analysis. And then the other thing I might do here is remembering that my table here. My data does not have a Time Dimension. So right now I’ve really only got order date and while Power BI does have some time intelligence. You may want to actually create some more.

22:58

Create some specific columns that do that so I can add in a custom column here for example, and I might want to enter in I can call it.

23:09

Order here.

23:11

And here I’m going too actually it gives me a little.

23:16

Calculation viewer so I can do year and you’ll see I get some type ahead functionality and then when I put that in there it’s going to expect as an input one of the columns and I can add in my order date and you’ll tell me no syntax errors have been created and then I get an order you’re calling that’s going to extract the year out that I can use in my analysis. Okay.

23:37

So once I’ve done that and so you can imagine I go through this exercise and I pull in more and more tables and I do more and more modeling here, but you can tell this takes Little Bit of time and a little Bit of effort. So once I pull that in it takes me back and closes power query takes me back into Power BI desktop and I now have my nice three little views here, which I now have to relate to each other. So I’m going to take my product ID from my products View and I’m going to go ahead and join that up.

24:12

Give me a little preview make sure that that looks the relationship and the cardinality looks right and then I want to join my address too. I’m going to use my ship to address ID here too. So I’ll be reporting off the customer information will be based upon who I ship that information to. So I make sure the cardinalities correct that relationship looks good and then a couple other things here.

24:36

I want to go through and my line total is a decimal number but I want it to be formatted to be a currency.

24:57

And I want it to be two decimal places. So these are the sort of things again that you have to do against transactional systems where that isn’t handled for you but I can change that in my model. And then finally I’m ready to go ahead and create my visualization so I can go out here and I’m just going to create some basic visualizations here in the interest of time so I can create a hierarchy. So for example, my product category hierarchy and it automatically creates this visualization. I’m going to change that to me.

25:27

It’s View and then I’m going to go down to my sales orders. I’m going to grab my new order year column and I’m going to throw that in the columns and then I am going to throw my line total into the values and there you go. So you can see I’m sure to get some data back. I can drill up and drill down on this and if I wanted to a geographic analysis of my customers and where they’re buying that stuff. I can go State province.

25:57

Code and drop that on country region code to create another hierarchy and then I can throw that into my visualization. It’s going to pull up a map.

26:05

And to that, I will add my LINE totals.

26:14

Right and the nice thing about this is you know, I can see here’s my numbers for North America for example, and I can drill down on that and see what it looks like from a state level. So I’ve got a pretty nice looking little visualization here, but that took a fair Bit of effort and a fair Bit of time. So hopefully that gives you a sense for what Power BI is capable of or sort of an intro to that and keep in mind now that I can also add new data sources to this, right.

26:40

This is a direct query so allows me to go in if I wanted to pull in more data. I could actually add data to this I could pull in a spreadsheet of information or whatever I wanted to do. So I have that ability with this with this design.

26:59

So In summary the pros to the direct connection are that it’s real time. You don’t have to copy your data it’s relatively low cost to get started or very low cost to get started. It’s pretty agile I can kind of do what I want and environment as you saw it’s very powerful. There’s a lot I can do in this tool perhaps more than about any other tool out there. The cons can be the performance remember that’s a transactional system that you’re running the business on so you could have system impact on that. It does require a fair Bit of effort to get that data.

27:30

Into an analysis ready format and if I’m doing this at the file level remember if I’m in a company of many thousand people and a bunch of people are using Power BI there could be a lot of people spending a lot of time creating these individual files, but hold your judgment on that because we’re going to cover some of the other methods here the and the data is point in time, you know, what of history or training or change data capture as I mentioned before so it’s really ideal for smaller groups or teams real-time use cases and as well as prototypes and so the methods that you have availability available to you to query that are direct queries. I showed you can do an import or you can leverage that composite model. So that’s kind of your beginner or starter level for from maturity level. So now the next option is connecting to an E DW now it’s a better practice and for purposes of this we’re defining it as anything other than directly hitting that transactional system, so it could be a proper EDW.

28:31

And of the ERP or transactional system and ODS at data Lake remember that your mileage level of performance and or modeling that you have to do can vary greatly what I’m going to connect to here in this example is more of a call it a proper EDW. The adventure works EDW. The benefits of any dwr is that they’re really designed for analysis. However, they can still be Big and complex and in the sense of having hundreds or thousands of tables and they won’t always contain all the data you need but it does have history captures.

29:00

Ages and has nice time and Geo dims and hierarchies and should generally perform well for queries, so there’s less transformation modeling required but there is upfront cost in that and then instead of doing the modeling here. You’re doing it out in something like SQL Server integration services. So sorry, I meant to drive in dive into this specific mode. So here your Source systems you’re doing ETL could be SQL Server integration Services could be Informatica.

29:30

Something else you’re landing that in another data source, and then attaching to that via Power BI desktop. Now keep in mind then you’re really kind of pushing that work upstream. So there’s some effort and cost around that but you get the benefits of being able to reuse it and just to give you a quick snapshot of what that looks like. This is visual studio and what the SSIS data flows look like to populate. Even our little Adventure Works Data Warehouse.

29:54

And this is the kind of thing that we help our customers with all the time and just to give you a quick snapshot of what this looks like this is just the sequel that’s used to populate the product dimension in this example. So there’s a whole bunch of those calculations in here. There’s business logic that gets incorporated into this. So this is non-trivial but there’s huge business value to be had in terms of being able to make this analysis ready. So what does that look like from a Power BI perspective?

30:27

So to do that we’re going to create a new file.

30:33

And instead of connecting to my loyalty P system. I have an Enterprise data warehouse that I’m going to connect to.

30:44

So I should have had this open before.

30:53

I’m going to click on my get data.

30:58

What am I database?

31:04

Log in again. I have the option of importer direct query because this is still just a relational database.

31:12

And this time I’m going to pick my adventure works data warehouse and you’ll see it’s organized very differently. I’ve got a handful of views, but then I have about 15 or 20 Express Dimensions that I’m going to pick from and I’m going to go ahead and pick some of those now, I’m going to grab my date and I’m going to grab my products and my internet sales so a much smaller number of tables in your data warehouse typically and it’s a little clearer kind of what I’m after.

31:42

Nonetheless though. I’m going to go ahead and I’m going to do some Transformations because I still have a snowflake product hierarchy and some of my customer information. I want to expand out to so bear with me while I kind of bang this out. So same sort of exercise where I go in this is power query and I’m going to use these table references to expand out.

32:08

When take out my French and Spanish Product category and so now I have my product hierarchy like that. So that was probably notice in power query to it keeps track of all of your steps here so I can sort of walk back in time and reorganize or change the settings for those if I haven’t done it correctly, which is really nice. I have a proper date Dimension.

32:32

So I don’t somebody’s already gone through that exercise of creating all those nice day calculations in their consistent with my organization and then on the customer Dimension, I am going to and this one out.

32:46

To include more of the Geo information and I’m going to get rid of my French and Spanish so that I can do you know that that map that I wanted to do but net of compared to the transactional system that I was reporting off of before there was a ton last modeling that took place in this particular example, so when I’m done with that, I’m going to then close and apply once again, and I’m going to be brought back into Power BI.

33:18

And now I’m ready to create my model, right? And so there’s already some primary foreign key relationships here. I have to re-establish these relationships because of because I change them in power query. So I’m going to go and relate buy my product key.

33:38

Check to make sure my relationships are okay my customer.

33:45

I am going to join.

34:01

My customer key.

34:36

Let’s see.

34:54

I’ll pull my finished one because I can’t seem to remember what that join was right now.

35:01

You can see none of that. There’s only a couple of joins that I have to do and I’m ready to do my analysis.

35:09

So soon as this comes up, I’ll build you the visualization and we will move on.

35:22

Be a live demo if we didn’t have some kind of snafu here so you can see here that I have my again my visualization that I’ve built using the product categories and I would jump back to my model here real quickly. So you can see my customer and geography dims my proper date dim and my product M. So it’s very easy for me to then go ahead and create this analysis and interact with it.

35:44

So a lot less work and reuse across the organization so jumping back to kind of Pros and cons of that the pros are that it’s pretty easy in because the data is designed for analysis. It’s also much more govern because to get into EDW. There’s a whole process that was gone through so that ETL that I showed you an SSI is it has been vetted and sanctioned and blessed by others in the organization. It’s generally performant. It also scales and you have great things like history Trends data capture and you get a higher level of reuse and ideally align business.

36:22

Metrics the cons of that are that there’s usually a penalty in terms of latency. You got to wait for the day to get there. It takes time for those ETL jobs to run. Sometimes the recency of the data the data may not be as fresh as with a real-time scenario.

36:38

There is some cost associated with building that it’s not as agile because if you have metrics or changes in the business or the business logic those have to be propagated from upstream and again, you might not have all of the data that you have in that you need for your analysis. Again. The nice thing about this is in this scenario. I could go back into that Power BI workbook that I could add another data source to it. So EDW use in all their various permutations are really ideal form or medium to large Enterprises and for more govern data needs and again, the methods are you can do direct query or you can do import or you can do leverage that composite dual model. So that’s kind of the intermediate.

37:22

Level now a permutation of that and that’s why I said was kind of four plus methods is that we see commonly used in this sort of a best practice is instead of a EDW is connecting to an SSA as tabular model now here instead of doing ETL you’re using visual studio to build a tabular model in SQL Server analysis Services, which is that columnar in memory store and then using Power BI desktop to connect to that.

37:51

So this instance what we’re going to do is I’m going to create a new one here, but I’m going to jump over to the internet sales here. And this is again in visual studio. So this is not necessarily for the faint of heart. This is not necessarily something that your analysts are going to do, but they’re pulling in tables from my adventure works data warehouse and pulling that in and defining KPIS and measures and then deploying that out to a SQL Server.

38:22

Osa services in memory store, so it’s highly performant and easy to analyze and I’ll show you what that looks like. So I’m going to go connect to that.

38:31

And this time for my database, I’m going to pick my SQL Server analysis Services option.

38:38

And I’m going to type in localhost again and you’ll see again. I have import but the second option isn’t direct query it’s connect live and that’s that live connection.

38:47

I was talking about the key difference being that when I pick my internet sales, for example it’s doing that live connection. Here’s all my dimensions and measures and I’m ready to go. You’ll notice I can’t do any modeling against this right? I can’t change anything. There’s no transforming that I can do I can edit the data source settings like point to the cube but that modeling option is gone. The data view option is gone. I only have the visualization capability. So it’s nice because I can, you know, go straight into actually creating the visualization.

39:28

However, I’m limited by what has been modeled in the SSAS tabular model.

39:35

So I’m just going to pull in some of this stuff too. Quick show you what this looks like.

39:46

Alright, so there’s my interactive amount now the numbers a little different because this has been pulled into a cube and it’s looking specifically at the internet sales, but I can do all the analysis that I wanted to do right I can get my geography and I can go to say country region name.

40:06

I’m putting my sales amounts and then you can see all my numbers here. So it is what it is right at this isn’t formatted correctly or I wanted to do this a little differently. There isn’t a lot I can do. I also cannot get data from this so I can’t go in add another data source this analysis. So this is it’s fast. It’s performant. It’s governed but you sacrifice a little Bit on the agility side. Now if I did an import from that into Power BI you could do some of that modeling and add other data sources.

40:35

It’s not generally recommended for various reasons. So the pros they’re very similar to EDW and that it’s easy. It’s designed for analysis governing performant. Its scales. You have can have things like your history and what not. The cons are again, very similar and you sacrifice a little Bit on the agility side. So if you’re trade-offs are more better governance and performance, which you sacrifice a little Bit of agility for again, ideally for medium to large Enterprises and govern data needs and the method is alive.

41:05

Action or you could do an import and the maturity level sort of an intermediate level. All right. So now you’ve created some workbooks you’ve connected to transactional sources. You’re a pro model or you’ve connected to EDW or maybe a SSAS Cube, but you’re still living in the file world and but you want to deploy this and get this out into your organization more. Well, the Next Step logical step here is what we call a shared data set. So now you’re going to be invoking and leveraging the Power BI service.

41:35

And a data set is really the way to think about the data set is a fully modeled subject area. That’s ready for reporting. Okay, and it’s so there’s it’s ready to go. It’s kept up on powerBI.com and it’s easy to leverage.

41:56

So what I want to do here and the easiest way to do this is just to use I want to use my ulti P1 here. And so I remember my first model against the oil TP system. And if I want to use this all I have to do and to create a data set is click the publish button and this is going to I’m already logged into powerBI.com and it’s going to publish it up to the service. It’s going to publish my content plus this data model and in the interest of time.

42:39

I’m actually going to jump up here, so you can see adventure works LTP finished in my data sets. And what I can do is I can either access this via powerBI.com so I can go up here. And this is this is the powerBI.com interface of the Power BI report creation interface on the web is a little tricky to grab this thing.

43:04

But I can go and create visualizations here using that data set on the web or I can go out and I can create.

43:16

Or I can access the content that was published when I when I went and publish this so I can either interact with this I can secure it. I can share it as a bunch of things I can do at this or I can leverage that data set so the data set there’s nothing I can do with it. I just connect to it and I’m off to the races now if you’re doing this from powerBI.com or sorry from Power BI desktop.

43:44

What you’re going to get is when you hit the get data button.

43:53

Is instead of picking a database or a flat file or whatever you’re going to pick the Power Platform option and you’re going to see a menu of options there.

44:10

So you can see Power BI data sets and data flows. I picked Power BI datasets because I’m logged in it doesn’t prompt me in stores. My credentials for me. It’s going to show me all the data sets. I have ready to use for analysis and you’ll notice the important thing about these is that you have the ability now to promote and or certify those and one of the big hole rise of these self-service tools as I mentioned in the beginning of the webinar kind of flipped the whole earlier.

44:39

This has driven centralized reporting on its head in that you had very governed metadata that was going out but it was in a limited fashion with Power BI with desktop and the use of data sets. Anybody could go and publish a data set to powerBI.com and there’s no knowing of that data set was any good. So in an attempt to introduce some level of governance here, they introduced the certified and promoted and it’s as simple as identifying data stewards in your organization your Power

45:09

Pi admins defined who has the ability to put these badges on so not just anybody can do it. And then really all you’re doing is sharing that set and there’s an endorsement area here where I can say. Hey this is promoted and this is certified and when I do that if I do that those bubble up to the top and I know that if it’s certified that this is something that’s really sanctioned by the organization. I can trust it and I can go ahead and build content off of it if it’s promoted. Maybe I’m looking at the owner and I’m sort of saying, okay.

45:36

Well they’ve at least taken some care to maybe vet the numbers and make sure that they I know but it’s not quite the same level. So there’s kind of a three level and then this you kind of get what you get and you taking your chances. So that’s what that looks like from a data set perspective. Now the pros and cons of that are that they’re nicely governed right there. They’re good to go. They’re easy to use their certifiable and promotable. They should perform again. Those datasets can connect live to underlying data sources or do Imports.

46:08

The Imports are obviously going to have probably more reliable performance because otherwise you’re depending on the underlying data source, you get a high level of reuse you get a if they’re governed properly you get a reduce impact on those sources because imagine if you have all these people creating data sets or workbooks against transactional sources and running queries and imports. It can really highly impact those sources and you have a better chance of having a line business metrics when people leverage the same data set and the cons are really there aren’t a lot of cons here except for you.

46:39

Absolutely need to govern these because if you’re pushing this out to Power BI Premium, Power BI Pro and leveraging the service and you just sort of let these data sources datasets propagate you can end up with some, you know for modeling and poor performance and the Wild West if you will so but this is highly recommended best practice in the organization assuming you do and use proper governance. So it’s ideal for sharing data across similar reporting use cases, right? So think about it.

47:09

As the sales analysis that I’m doing what I want to look at my customers and my sales amounts and things like that. It also uses that live connectivity. So when I connect to it, it’s not very agile. I can’t add other sources to it. So it kind of is what it is. And when you’re here, you’re kind of on the intermediate level of the BI maturity curve.

47:29

Alright, so the last option here option for is data flows. Now, we’re moving even a little more upstream in the Power BI service and the way to think about data flows from a definitional perspective is reusable data objects in Microsoft parlance are called entities. You can really think of them as a as an object and the most obvious one is something like time and I’m going to show you how this works drilling into that a little bit more you’re connecting to a data source or sources.

47:59

You’re on powerBI.com. So you’re doing this via the web and you’re leveraging power query on the web and it’s it is always going to pull the data. So think of it like a bit of an ETL job. It’s going to pull the data based upon the model that you build and land it or transform it rather and landed in Azure data lake gen2 storage from which you can connect to it via our BI desktop. You can create a data set out of it. There’s a bunch of things you can do it do with it. So to show you what that looks like.

48:29

I’m going to back here. I’m on powerBI.com and I want to I’ve got some data flows up here. Right? So I have for example my adventure works where I’ve put up a products entity in a customer entity and I can use those in reporting now. I’ve also put up a date to mention up here, but I want to show you what that looks like. So I’m going to create a data flow and I’m going to define new entities and in order to do that.

48:59

That I’m going to use the Time Dimension that’s in my data warehouse, right because I’ve modeled this carefully.

49:13

And I want to use it across my organization across different reporting needs because every reporting scenario pretty much needs a Time Dimension and it has some intelligence built into it. So it knows it when I type in my database right? It’d be nice if it actually a little drop down here. It knows that my on premise data gateway detects that this is a data source that I’ve already defined on powerBI.com. So I’ve defined this already and from there. This is going to look and behave almost exactly like Power BI desktop, so it’s connected to the data warehouse.

49:42

So you see that looks exactly like it did and I’m just going to pull in my deep dimension.

49:49

And then the only thing I really need want to do here just to show you what that looks like. I’m going to pick the table or tables that I want. I’m just going to pick the one and I’m going to pull that straight in because it’s pretty much exactly how I want it and now you’ll see this looks pretty much exactly like your desktop so I can do all those great Transformations. The only thing that I want to do here is I’m going to change my data type on this one to a date time.

50:15

And again, it keeps track of all my steps for me and I’m going to go ahead and save and close this and it’s going to go and pull that into powerBI.com. It’s going to ask me for a name.

50:29

And in the interest of saving time here, I’m going to jump back over to my initial OLAP model, right? Remember I had done all this modeling and it’s against the transactional system. I didn’t have a proper date dimension. Well a good way to make this model a little more high quality would be to add a date dimension and a great way to do that is to leverage a data flow. So I’m going to go up. I’m going to hit get data. I’m going to go to Power Platform. I’m going to pick a Power BI

50:59

data flow I’m signed in to powerBI.com. So it’s going up to powerBI.com. It’s getting me the structure and I can go click on the workspace that I’ve been operating in. Here’s my demo date or my dim date and I can pull that into my visualization. I’m going to use the one that I published already.

51:17

And I’m just going to go ahead and load that guy.

51:27

It’s going to give me a warning.

51:31

Or not and I’m going to use my dick key and I’m going to join that thing up to.

51:38

Ship date or rather, I mean he’s full alternate key, right so you can see that those date formats lineup. I’m going to say OK create my relationship and now I can go back to my model and instead of using my calculated Fields here that I generated. I can actually alter these and I can use my sanctioned.

52:05

Dates upset forgot to do one thing. So you notice here that I put some their sums next to all these one of the great things is that it’s really easy to iterate through your model here. And so one of the things I can do is Power BI wants to summarize all of these different fields and I don’t want it to so I can go ahead and say guess what?

52:27

I don’t want you to summarize this flip back and that’s something that in the old model could potentially have taken weeks right to make that happen, but I can iterate quickly through this and then I’m able to use that hierarchy and use my official time dimension in this model and I could publish this this can become a data set so you can sort of see the inner relationship now between modeling something against one system publishing that up to powerBI.com to leverage a consistent data set across the organization certifying and promoting those and then pulling in things like data flows to give you nice reusable entities that give you more consistent reporting and enable reuse and all the and governance and provide governance in the organization. So the pros of that are really you don’t have to everybody doesn’t have to go through the exercise of doing all those Transformations and calculations. They can reuse these objects across different reporting scenarios. You get a higher level of governance. Ideally performance is optimized and it gives you a certain degree of agility.

53:30

The cons are potentially recency and data duplication because you are pulling that data in and putting it into Azure data lake to into storage. And so it’s really ideal for organizations who are really looking to move from the desktop and scale Power BI to the cloud into the enterprise and for governed use cases. The method is only import. It’s going to always pull that data into Azure data lake into storage and then your connection to that, you know is what it is and you’re BI maturity level.

54:00

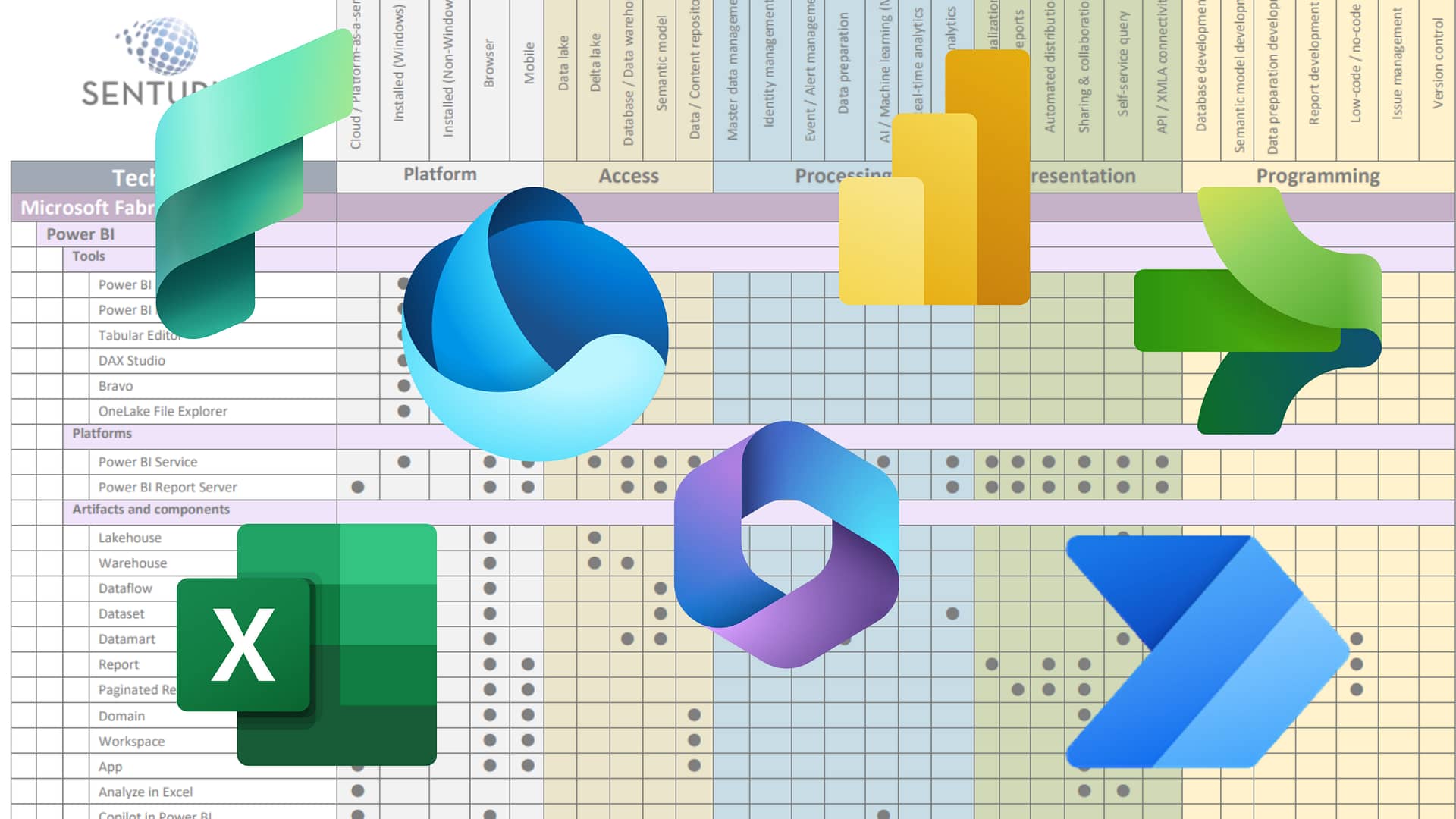

Congratulations if you there you’ve reached the level of pro. Alright, so those are all of the four methods that I have for you today in the interest of giving you something to kind of take home and chew on here or perhaps if you’d like to start a conversation with us about this as we attempted a summary comparison Matrix where we look at all the various types of connectivity and then the sub-query methods so I demonstrated transactional eat.

54:31

DW SSAS data sets and data flows. I put in we put in another two rows here for ODS or data lake that sort of sitting in between those two scenarios. And then the query methods under those we identified where the model resides so if it’s in Power BI desktop or an SSAS or the service and then you can analyze some of the you can take a quick look at some of the trade-offs here whether you’re getting good performance. Where is your cost? How recent is your data? etc.

55:01

So hopefully this helps you sort of identify. What scenario is best for you? Keep in mind your mileage may vary we do have to make some assumptions in here based upon we can’t take into consideration every possible scenario or permutation and then the last slide here. I’ll let you Analyze That offline because I have time to go through every single.

55:25

One of them is we try to summarize sort of the best practices and you use case by ouch, so and they do follow roughly in the order that I presented the information. So from sort of your least best practices a loaded term you’re least desirable best practice. Is that a is that a way to phrase it all the way up to kind of your best practice, but you have these use cases right where you’re going to want to do direct.

55:54

So if you don’t have budget you need real-time and the director is the way the way to go if you want to reduce the impact and maybe import or Is it method using the direct connection to the transactional system? So again sort of roughly follows the flow of the presentation that I did here today and goes from you know, worst to best so which is best for you. Well, the answer is really, you know, it’s summer all of the above and it really depends on the size of your organization. The use cases and is a balance of all those factors that I talked to front and this is an iterative process. It will morph over time.

56:30

You’ll do your typing your quick and dirty test case with your analyst on desktop as a direct connection or as an import and then as you want to harden that and scale that to the organization you’re going to move into one of the other options and that cycle, you know as those of those of you even been in BI for a long time, you know that this is like an ERP upgrade or something where you do it and you’re done good be I continuously evolves and continuously seeks to answer more and more complex questions.

56:59

And those are the kind of things that we help at Senturus with all the time. So hopefully you found that interesting. I’m going to go through a couple of quick slides here on Senturus, please stick around because I do have some great free resources and we have the Q&A at the end that will get to real quickly here. But if you need help with Power BI as you can see, it’s a complex tool both in terms of the desktop and the Power BI service whether you’re trying to get into Power BI premium or you’re trying to go from the desktop the enterprise you need training, whatever it is. We can help you with a mentoring session.

57:31

To help speed up your dashboard creation reuse data sets share dashboards across departments. You can learn more at the link below that’s in the deck of a couple of quick words about centered about Senturus. We are the authority and business intelligence. All we do is BI all day every day and we’re unrivaled in its expertise across the BI stack our clients know us for providing clarity from the chaos of complex business requirements myriad ever-changing data sources.

57:59

And constantly moving targets and regulatory environments and business requirements. We made a name for ourselves because of our strength at Bridging the Gap between it and business users. We deliver solutions that give you access to reliable analysis ready data across your organization. So you can quickly and easily get answers at the point of impact the form of the decisions you make and the actions you take we offer a full spectrum of BI Services ranging from building dashboards reports and visualizations to helping you with data prep modern data warehousing.

58:29

Migrating BI systems, whether it’s upgrades performance optimizations migrations from one BI system to another migration of your data warehouse. We even offer software to enable by mobile BI and platform migrations and little array of training and mentoring or expertise on demand. Our Consultants are leading experts in the field of analytics and they have years of pragmatic real-world expertise and experience advancing the state of the art fact. We’re so confident our team and our methodology that we back our projects with a 100% money-back guarantee.

58:59

See that’s unique in the industry.

59:02

We’ve been doing this for a while almost two decades. We work across the spectrum from Fortune 500 to mid-market solving business problems across many Industries and functional areas, including the office of finance, sales and marketing manufacturing operations. HR and IT. Our team is large enough to meet all of your business analytics needs yet small enough to provide personal attention.

59:23

We have hundreds of free resources at Senturus.com/resources. So again this is where you can find the deck and the recording head over there and there’s all kinds of great stuff including past webinars our blog a lot of great stuff upcoming events. These are all free definitely go check them out on our events page on our site really excited about this one complex aggregation engines in Tableau. We’re talking about table calculation and level of detail calculations on Thursday, March 26th.

59:51

You can go sign up on our site, we’ll also have a webinar on what’s new in Cognos 11.1.6 in about three weeks after that and then we’ll be doing a webinar on Tableau Prep a week after that one. So definitely come join us for that. And then lastly I’d be remiss if I didn’t mention our great training offerings whether it’s for Cognos, Power BI or Tableau, we offer a full spectrum of training across different modalities, whether it’s tailored group in-person sessions, instructor-led online courses, self-paced e-learning or in-person mentoring. That is so critical.

1:00:23

Cool with tools like Power BI and then lastly all of our additional resources here that again you can find on our resources page. We’ve been committed to sharing our BI expertise for almost two decades.

1:00:38

We’re at the top of the hour. And I know we have some questions. We have a slew of questions. We have a slew of questions. All right, you want to hit me with a few of them? Yeah, let me try to prioritize and and what I will say for everyone is that if we don’t get through all the questions, we will definitely post a questions log you with the answers on our website at the same link. I provided in the chat window there.

1:01:07

All right. Let’s see. So, can you okay if you import using an Excel spreadsheet, does it Auto map the columns?

1:01:20

Does it Auto map the columns so what it does is it will pull that in it and it tries to read the data format. So if that’s what you talking about, yeah, then it’ll pull up like if it sees a date field it should come across as a date field. It’s a function of the quality of your data in an Excel.

1:01:38

Okay, one of the questions was how aware to specify the composite type query and that we might need to take offline probably to walk through right? That’s actually pretty straightforward. Right? If you’re in your model, you can click any given object. So first of all, when you when you do the get data, you saw the I have those radio buttons for import or whatever, but that sort of defines it for that entire query, but I can set it up right here so I can change for example, my sales orders to be an import and I define it right here in the model.

1:02:08

So I select the storage mode. You see the import direct query or dual. There’s definitely some caveats around that and it will behave differently based upon what you do, but that’s where the setting is. Okay, and in Power BI the prepare data function, is that considered to be ETL like tool?

1:02:32

Yes so that when you say Power BI prepare data capability, so the way they frame it is you do the get data and if I want to further model the data, which I generally do unless it’s I go into the transformed data hit the transformed data button. And by the way, this is a new UI that they literally just kind of came out with yesterday. It’s been in preview since December, but now it’s the default as of last night.

1:02:59

So when I go in here, this gives me that transform capability now, it’s no different than the Transforms that you might do in an ETL tool, but if I’m doing direct query, I’m not actually pulling that data and Landing it in to Power BI right. I’m issuing actual queries back to that system. This is a view of the data. So it only becomes I would say quasi ETL if I do an import then it’s going to pull the data and land it in a tabular model here in the in the Power BI applications.

1:03:38

Action, so hopefully that clears that up.

1:03:42

And there’s one question. Can you turn off the import function for analysis Services cubes to prevent users from manipulating a governed data source, quote unquote govern, you know, that’s a good question. I’m not aware of that capability. I don’t know that you can but I’d have to have to have to research that. Yep, Q&A and then well, this is very specific.

1:04:10

That’s sufficient as a semantic layer to your data as compared to something like Cognos Framework Manager. It’s pretty specific comparison to the Cognos platform. So it’s I would say it’s similar to it. Yeah Framework Manager model allows you to connect to your data and model it. Cognos is heavy on modeling you’re able to model the data, right? You’re able to kind of model this star schema there isn’t a lot of transform.

1:04:40

These data modules are introducing some transform capabilities which are different than the modeling capabilities, but you can’t do like in framework manager. You couldn’t do the fill down, right and a lot of the like the data pivoting you couldn’t do that stuff.

1:04:55

So there’s a lot of transform capabilities that are here that don’t exist in something like framework manager, but the idea that you’re seeking to get to this virtual or Star schema for reporting purposes is the same so they have roughly the same intent, but framework manager being 20 plus year old tool is comes from an age when they kind of assume that you were going to do more of that that transformation kind of upstream in the in the data sources if you will and where you are able to cube there’s a question if you can the data flow import Power BI data data sets and I think the answers absolutely.

1:05:35

Yes, so a data flow doesn’t in a data set a data set can be created off of a data flow. So the first okay. Remember my I know it’s I get confused with the two I have to think about it sometimes so in this case like this dim date is used from comes from the data flow and notice. It’s always going to be an import right because it’s coming from because it’s coming from that data flow. I could now publish this up to Power BI and it would create a data set. So it’s actually I did put up.

1:06:10

Up here. I put it up here. So now I have a data set that includes the date, time, right?

1:06:17

So now I go up here and I can use that so my data flows can’t really leverage data sets, but you can go the other way around. So when I create a new entity, I don’t really have an option here to pick a data set or you know other Power BI Platform. Now there might be a way to trick it. I know there’s certain things here that don’t flow back and forth quite as cleanly like when you’re that and all this you can’t do all the stuff that you can necessarily do in desktop, right?

1:06:50

So you might end up taking each one of these steps writes an M query which is their language and I can take that if I can’t do it in powerBI.com Always copy and paste the M query or SQL up there to accomplish kind of what I want to but anyway, that’s kind of long answer to your question. But the Box are kind of easily. No, you can’t do that. Okay, we’re almost 10 after so I think I’m going to propose that we take everyone’s questions. We do have a slew more, you know several more that we can address and we’ll post them publish them up to our website using the same link that I include you in the chat and we’ll take it from there. Sounds good. Yeah.

1:07:27

Thank you everybody for your time and attention today. Appreciate you joining us. Stay safe out there. Stay healthy keep watching those hands and we look forward to seeing you on the next senturus.

1:07:40

education event. Have a great rest of your day.

1:07:43

Thanks, everyone.