Having Cognos operate independently from Power BI or Tableau defeats the goal of modern BI: fast, unified decision making based on secure, accurate data. The lack of integration is costing companies time-to-action and competitiveness.

Watch this on-demand webinar to learn about the Senturus Analytics Connector, a simple drag-and-drop solution for integrating these distinct tools. You’ll see how easily the Analytics Connector allows Power BI and Tableau to tap the secure, curated data in Cognos. This powerful BI tool pairing is one both IT and business users can appreciate.

Through demo and discussion, you will learn about

- Drag-and-drop functionality that eliminates time-consuming data remodeling efforts

- Improvements to security, data accuracy and overall Power BI/Tableau performance

- Features that reduce query waiting times and boost performance

Presenters

Arturo Ayala

Lead Developer, Senturus Analytics Connector and Migration Accelerators

Senturus, Inc.

Arturo is the lead software developer for the Senturus Analytics Connector and the Migration Assistant. Along with a decade of experience in software development, he brings a love of data and complex structures to his role. Arturo ensures that the Senturus migration accelerator tools evolve to meet client and market requirements for streamlining Power BI migrations and leveraging data in a hybrid BI environment. Fluent in Cognos, Power BI and Tableau, he is also experienced in multiple data, cloud and full stack technologies including Angular, Java, .NET and Node.js.

Steve Reed-Pittman

Director of Enterprise Architecture and Engineering

Senturus, Inc.

Steve’s career in data and analytics spans 20 years and includes clients from the Fortune 500 to the Inc. 5000. He is adept across many facets of analytics including data architecture in physical, virtual and cloud environments. Steve transitions easily from the server room to the boardroom, speaking the language and understanding the priorities, risks and complexity that accompany the selection, implementation and operation of technology in fast-moving enterprises.

Machine transcript

0:11

Hello, everyone, and welcome to today’s Senturus webinar on dragging and dropping Cognos data into Power BI or Tableau.

0:21

Thank you for joining us today.

0:22

Great to have everybody here. A quick overview of our agenda for today.

0:27

We’re going to start with introductions, quick introductions.

0:30

We’ll introduce our main presenter.

0:33

We’ll do a quick overview of the Senturus Analytics Connector, which allows you to connect Tableau and Power BI directly to Cognos curated data.

0:42

We’ll do a demo of the connector with both tools.

0:45

We’ll go through some frequently asked questions, quick overview of Senturus as a company, what we do, some additional resources we have to offer.

0:53

And as I said before, we’ll do some Q&A at the end.

0:58

So before we kick off all the details, quick introductions.

1:02

Our presenter today is going to be Arturo Ayala.

1:05

Arturo is our lead developer for the analytics connector among other software tools here at Senturus.

1:11

And Arturo is going to be driving things today and showing off the connector to you.

1:16

I’m Steve Reed-Pittman, Director of Enterprise Architecture and Engineering.

1:20

I’m here to be sort of the intros and the outros, but Arturo is going to really drive most of the show.

1:28

Before I turn it over to Arturo, I would like to do a couple of quick polls.

1:31

We just want to get a sense of kind of where everybody is in terms of what platforms you’re using, kind of what your plans are.

1:38

So poll number one is which platform or platforms are you currently using in your organization.

1:46

So let me kick this one off.

1:48

Get that going.

1:51

And so presumably everybody on this session is at least using Cognos.

1:57

But it’s always interesting to know whether more folks are moving towards Power BI or more are working in the Tableau world.

2:03

And we also encounter folks who are using everything.

2:06

So it’s always interesting to find out.

2:09

I’m just looking at some of the results as they come in here.

2:12

And of course, almost everybody is answering Cognos. Let me share those results out.

2:17

So yeah, and of course, almost all of you are using Cognos.

2:21

A little over half of you are on Power BI, so actually a pretty good split here between Power BI and Tableau.

2:28

So we’ll be showing you how to use both of those tools with your Cognos data here today.

2:35

Alright, let’s do just one more poll before we jump into Arturo’s coverage.

2:42

So the second poll is what are your future plans with Cognos?

2:45

Are you planning to keep Cognos for the long term?

2:48

Are you keeping it for the short term but not sure what’s going to happen later?

2:53

Are you eventually going to migrate?

2:55

So let me start this one up.

2:59

So I’m going to go ahead and close this out and share those results.

3:04

So again, about half of you are keeping Cognos long term, and about a quarter of you are keeping

3:09

Cognos for sure in the short term, not so sure in the long term, but only about 14% of you plan to migrate off Cognos completely, and 10% of you are actually in that process right now.

3:22

That’s pretty consistent.

3:23

We see that with a lot of our customers, a fairly small percentage, but there are folks out there who are actively moving to a new platform.

3:36

All right, so with that, I’m going to stop talking and turn it over to Arturo to drive the rest of the show.

3:45

Thanks, Steve.

3:46

Hopefully everyone can hear me.

3:48

Hey everyone, my name is Arturo.

3:49

I’m happy to be here to discuss the Analytics Connector and its role in your BI efforts.

3:55

I want to start by spending some time looking at the inner workings of Cognos.

3:59

Now, this is a somewhat oversimplified diagram, but it’s going to help us identify the pain point of getting data out of Cognos.

4:07

On the left, we have our data sources.

4:09

This is usually a SQL Server or an Oracle server, but it all depends on what technologies you’ve adopted, right?

4:16

We’re going to call this our hard data, and our hard data exists apart from Cognos, but it’s completely necessary in a Cognos setup.

4:23

Over to the right, we have our FM models, cubes, or data modules.

4:27

And these entities are based on our hard data and really extend our hard data, and they eventually become what Cognos calls a package, right?

4:36

And a package is just an extra layer of logic on top of our hard data.

4:41

And we can refer to it as our metadata layer.

4:43

And this metadata layer can be as simple or complex as we want it to be.

4:48

And disclaimer, it’s usually pretty complex and all the way to the right, we have our reports, right?

4:56

And it’s that metadata layer, these packages that we use to create our reports and dashboards.

5:03

Now our package or metadata layer only lives in Cognos.

5:07

And on the surface, it’s not really an issue.

5:15

The moment we want to use Power BI or Tableau, it does become a problem.

5:19

Now there’s different reasons why an organization would want to use these tools.

5:23

Some may need specific functionality they can’t get from Cognos, some may want to drop Cognos altogether, and others may just want to leverage their existing licensing or expertise in one of these tools.

5:34

Whatever the reason may be, the fact that our metadata layer is stuck in Cognos really holds us back.

5:43

I think everyone can agree they’ve invested a lot into their Cognos models.

5:47

This is a screenshot of Framework Manager, where our models and metadata are created.

5:52

You or someone in your organization has spent a lot of time here, I guarantee it.

5:56

The job takes a deep knowledge of the data and lots of validation to make sure the models are up to par.

6:02

After all, reports are based on this data, and your business decisions are based on those reports.

6:10

I mentioned how the metadata layer can be as simple or complex as we need it to be.

6:14

Here’s some more screenshots of Framework Manager.

6:17

There’s a lot going on.

6:18

Relationships are being made, new calculations are being added, points of data are being transformed into something meaningful.

6:25

And this is the data you want to work with when building your reports and dashboards, no matter what tool you use.

6:32

But it’s the same data that proves elusive in Power BI and Tableau.

6:37

And the reason is because Cognos has a firm hold of it.

6:40

The metadata layer that transforms our hard data belongs to Cognos.

6:44

If we want it in any other tool, you basically have to recreate it.

6:47

And that comes with its own challenges.

6:50

First, it’s a big lift.

6:51

The more complex your data model, the bigger the effort to recreate it.

6:55

And if you do end up recreating it, you now have two sources of truth that need maintaining, and that’s not desirable.

7:00

You just want a single source of truth.

7:03

And all of this takes time and money and you may not be in a position to offer either.

7:08

Cognos has built in security that maybe makes you compliant with a security standard and all that’s out the window when you’re looking outside of Cognos.

7:17

Or you basically have to recreate it.

7:19

Thankfully, here at Senturus, we’ve done something about this problem, and I introduce to you the Analytics Connector.

7:26

The connector is a great solution for those that are exploring new tools and may want to coexist with Cognos and Tableau or Power BI.

7:33

It basically acts as a bridge from your existing models in Cognos to Power BI or Tableau, so you can leverage the data you trust and the tool you want.

7:42

Setup and config is quick and you can start building reports in no time.

7:47

On top of that, any security you’ve built in is still enforced and audit trails continue to be kept.

7:53

So we’ll move on here.

7:55

So by now we have a clear understanding of our problem.

7:59

We need our Cognos data in a different tool, and there are different approaches to solving the problem.

8:05

Here are two popular approaches.

8:08

One could be to simply access the databases driving your models in Cognos via your favorite BI tool.

8:14

You know, accessing that hard data, right?

8:17

The problem with this is that any logic you built in Cognos is disregarded.

8:22

You would have to recreate some of that logic or metadata to make this a viable option.

8:28

Another could be to use Cognos as a sort of ETL tool and extract any needed information via a CSV dump in the form of a report.

8:42

And an interesting point is that we found that some clients’ top five reports are for this sole purpose.

8:47

Now in my opinion, this is probably the best strategy because you get that metadata layer included in your data, but it’s hard to automate.

8:58

You know, you can automate the CSV dump generation, but you have to manually upload it to your tool of choice.

9:06

And usually you also violate security measures because the CSV dump is done in the context of the user running it.

9:13

So let’s say, you know, further down the line another user comes and accesses that CSV file. They will probably see data that they don’t have permission to see, right?

9:23

And that of course, is something that we don’t want.
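
To make that risk concrete, here is a minimal Python sketch. The user names, the row data, and the `visible_rows` and `export_csv` helpers are all hypothetical; it only illustrates the general problem with a static export and says nothing about how Cognos itself produces its CSV output.

```python
import csv
import io

# Hypothetical data: each row carries the region it belongs to.
ROWS = [
    {"region": "Americas", "revenue": 100},
    {"region": "Europe", "revenue": 200},
]

# Hypothetical permissions: which regions each user may see.
PERMISSIONS = {
    "manager": {"Americas", "Europe"},
    "analyst": {"Americas"},
}


def visible_rows(user):
    """Return only the rows the given user is allowed to see."""
    allowed = PERMISSIONS[user]
    return [row for row in ROWS if row["region"] in allowed]


def export_csv(user):
    """Write a CSV snapshot in the security context of the user running it."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["region", "revenue"])
    writer.writeheader()
    writer.writerows(visible_rows(user))
    return buffer.getvalue()


# The manager runs the export; the file now holds everything the manager can see.
snapshot = export_csv("manager")

# Anyone who later opens the file reads the manager's view, not their own.
rows_in_file = list(csv.DictReader(io.StringIO(snapshot)))
rows_for_analyst = visible_rows("analyst")

print(len(rows_in_file), "rows in the shared file")
print(len(rows_for_analyst), "rows the analyst is actually entitled to")
```

The point of the sketch is that the permission check happens once, at export time, so the file keeps the exporting user's view no matter who opens it later.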

9:29

But really the thing with these two approaches is that we’re circumventing the metadata layer one way or another.

9:37

Now you can recreate that metadata layer, but why should you?

9:42

Well, it may be your only option in some scenarios.

9:45

You generally don’t want to go down this route.

9:48

For one, it compromises data integrity.

9:50

These remodeling efforts are a big lift, and the bigger they are, the more error prone they are because of everything that’s involved. It also compromises data security.

9:59

Any security you built in via Cognos authorization can no longer be counted on, and it’s time consuming.

10:06

You’re essentially reinventing the wheel in another technology.

10:11

And lastly, it creates data silos.

10:12

You know, one user group may have the right data and another has a completely different set of data and they may reach different conclusions.

10:20

And of course, that’s a problem, right?

10:24

So the question becomes, how do we do it?

10:26

How do we access our Cognos data in Power BI or Tableau?

10:31

And that’s where the connector comes in.

10:33

Cognos is our source of truth.

10:35

So let’s keep it that way.

10:37

And it’s something that can be achieved with the connector. Users can access their data in a way similar to that of Report Studio in Cognos Analytics, while leveraging their favorite BI tool and the expertise they have in that ecosystem.

10:49

So this screenshot here contains both Cognos Report Studio on the left and Tableau on the right.

10:57

In Report Studio, you can see that we have our GO Sales (query) package selected and you see how it breaks down.

11:03

In Tableau, you can see how we have the same package selected and how the items in the table section match what you see in Report Studio.

11:08

We see how our packages, query subjects, query items, measures, and dimensions become databases, tables and columns in Tableau.

11:19

And I’ll talk about that conversion a little bit more during the demo.
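
As a rough picture of that conversion, here is a small Python sketch. The `Package` and `QuerySubject` classes, the `as_relational_view` helper, and the item names are hypothetical and trimmed down for illustration; this is not the connector's actual implementation or API.

```python
from dataclasses import dataclass, field


@dataclass
class QuerySubject:
    """A Cognos query subject and the query items it contains."""
    name: str
    query_items: list[str] = field(default_factory=list)


@dataclass
class Package:
    """A Cognos package: a named collection of query subjects."""
    name: str
    query_subjects: list[QuerySubject] = field(default_factory=list)


def as_relational_view(package):
    """Describe a package in database terms: database -> tables -> columns."""
    return {
        "database": package.name,
        "tables": {qs.name: list(qs.query_items) for qs in package.query_subjects},
    }


# Hypothetical, trimmed-down package; names are illustrative only.
package = Package(
    name="Sales (query)",
    query_subjects=[
        QuerySubject("Products", ["Product line", "Product type", "Product"]),
        QuerySubject("Sales", ["Quantity", "Revenue", "Planned revenue"]),
    ],
)

view = as_relational_view(package)
print(view["database"])
for table, columns in view["tables"].items():
    print(table, columns)
```

In this simplified picture, measures and dimensions alike end up as plain columns, which is why the BI tool can treat the package as an ordinary database.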

11:24

You know, it’s really easy to see your data the way you expected, and all the effort that went into your Cognos models is persisted across these reporting tools.

11:33

Basically, all the hard work that you did in Cognos isn’t lost when using the Analytics connector.

11:38

With the connector serving as your bridge to Cognos, you can start adopting the reporting tools you want with the data models you need.

11:45

Before I get into the demo, I want to recap the important points of using the connector.

11:50

For one, you don’t have to remodel your data.

11:52

You get to leverage your existing models and because of that, you can be confident the data you’re dealing with is accurate.

11:59

You can keep depending on your security measures because they’re persisted in whatever tool you use.

12:04

And like I mentioned earlier, installing and configuring the connector is quick.

12:08

You can start building reports that you trust right away.

12:13

And lastly, you can ensure your user base is accessing one single source of truth, and that’s always a good thing.

12:19

And one more point before we get into the demo, I just want to mention that you can publish your reports and data sets from Tableau or Power BI up to Tableau Server and the Power BI service to share across your organization.

12:35

Using the connector, you can create a data set that originated from Cognos and using Tableau Server and the Power BI service, you can expose that same data set to the rest of your team for their own reporting purposes.

12:47

Now we talked about not sacrificing integrity and security for agility in the last slide.

12:52

The fact that you can publish these datasets is huge.

12:55

It gives your users instant access to your Cognos data in an environment they’re accustomed to.

13:02

So with that said, we’re ready to get into the demo.

13:06

And I’m going to go into my desktop here and we’re going to start off with Power BI.

13:14

So I’m just going to create a somewhat simple report leveraging one of our Cognos packages.

13:24

So the connector impersonates a SQL Server just for the sake of compatibility.

13:29

So that’s why I’m clicking on this SQL Server connector.

13:33

And we have the connector running on a server called Internal BI One.

13:39

I’m going to select DirectQuery for our connectivity mode.

13:42

We also support Import mode, but DirectQuery works a little bit faster just because it doesn’t have to pull in all the data.

13:48

So let me click OK here and we have to log in.

13:55

So you use the same credentials that you use in Cognos Analytics when logging in and that depends on whatever, you know, security namespace you have set up here.

14:09

I just want to make a point that I’m using the Cognos embed user.

14:14

A little later I’m going to use a different user to showcase row-level security.

14:20

Let me see if I remember the password here.

14:28

OK, here and all right, so we’re connecting, and here you see a list of packages, right?

14:39

So here I’m going to switch from Cognos jargon over to database jargon because our package really becomes a database.

14:48

So I’m going to select the sales query package here.

14:52

And this is a subset of the Go Outdoor Sales sample package that comes bundled in your Cognos installation, so it may look a little familiar.

15:02

So here we have a list of what in Cognos are known as query subjects, right?

15:07

Over here in Power BI and Tableau, they’re just seen as tables, database tables.

15:12

So I’m going to select a few of these.

15:15

We’re going to start with Branch, Order method, Products, Sales, and we’ll get some date information in this Time table.

15:22

We’re going to load these up, and what Power BI is doing right now is requesting metadata about these query subjects, or tables.

15:33

So the connector is returning what columns, or what query items, belong to each table.

15:40

And we see this over here in the Data pane.

15:46

And before we start creating our report, there is a modelling exercise that we have to do.

15:53

Now, here we’re going to create relationships between these different tables.

15:59

And you may be telling yourself, hey, we already built these relationships in Framework Manager.

16:04

Why do we have to do it again?

16:05

And we’re not really recreating them.

16:08

We’re just giving Power BI a little help because Power BI needs to know how these tables relate to each other to formulate the queries when we drop a visualization in.

16:17

So you’ll notice that each of these tables has this link column at the very top.

16:23

This is a dummy column that’s injected by the connector and it’s just there to facilitate relationships.

16:30

So here I have my Sales table in the middle, which is our fact table, and we have our dimensions on the side.

16:37

And I’m going to create relationships from ourselves, from our fact table to our dimensions.

16:43

I’m going to do many to one here for all of these tables.

16:47

And by the time we’re done, we’re going to have like a little nice snowflake looking thing.

16:53

And for anyone familiar with modelling, that’s usually what we want to go for.

17:04

Now I do want to make a point that this relationship building exercise can really be a one-time thing.

17:12

If you leverage the Power BI service or Tableau Server, you can publish this model. In Power BI, it would become your semantic model, and then you can share that with everyone and they can use that model without having to go through this relationship building.

17:30

So now that we have this done, we can actually start creating our report.

17:34

So we just have a lot of sales information.

17:36

So let’s try to visualize all that on a map here.

17:43

So I’m going to add a map and I’m going to add country here.

17:53

So we have all the countries that we have data points for here in this little bubble.

17:57

And we’re going to give some meaning to that bubble and we’re going to add in revenue here.

18:06

So like if we take the US here and actually let me format this really quickly so it’s a little easier to see, but we can see that the US has, you know, $533 million of revenue.

18:22

And what I’d like to do here is to make sure that our data is accurate, right?

18:26

Is create a simple report in Cognos and just compare the numbers.

18:32

So I have that ready here.

18:35

And so let’s compare.

18:37

So United States: $533,803,961.85. So you can see that it matches the Cognos report, and that’s what we want to see and that’s what we expect, right?
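
If you wanted to script this kind of spot check rather than eyeball it, a minimal Python sketch might look like the following. The `parse_money` and `matches_to_the_cent` helpers are hypothetical, and the way each tool formats the figure is an assumption; only the United States revenue number comes from the demo.

```python
from decimal import Decimal


def parse_money(text):
    """Turn a formatted figure such as '$533,803,961.85' into an exact Decimal."""
    return Decimal(text.replace("$", "").replace(",", ""))


def matches_to_the_cent(a, b):
    """True when both figures agree after rounding to two decimal places."""
    cent = Decimal("0.01")
    return parse_money(a).quantize(cent) == parse_money(b).quantize(cent)


# The United States revenue figure from the demo, in two assumed display formats.
cognos_value = "$533,803,961.85"
power_bi_value = "533803961.85"

print(matches_to_the_cent(cognos_value, power_bi_value))
```

Using `Decimal` instead of floating point keeps the comparison exact at the cent level, which matters when totals run into the hundreds of millions.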

18:53

Let’s continue building our report here.

18:56

And I’m going to showcase this bit. Really, it’s Power BI functionality, not Cognos specific or Analytics Connector specific, but I want to do it just so you can see how the connector works seamlessly with Power BI.

19:12

So I’m going to create a hierarchy and we’re going to start off with this product line.

19:17

And a hierarchy is really just a way to organize our data.

19:22

So you can think of it as having like a parent level and children beneath it.

19:28

And let me rename this to product hierarchy.

19:34

So we have our product line and then I’m going to add product type.

19:39

And then the last level is going to be this product here.

19:46

And the matrix in Power BI is a great way to visualize these product hierarchies here.

19:53

So you can see how we have these different product lines.

19:57

And as I expand them, there’s, you know, items beneath it.

20:01

So we want to see sales related to these products.

20:04

So let me minimize this and add revenue, and let’s add planned revenue too.

20:14

And let me format this planned revenue just so it’s a little easier to see.

20:22

And this revenue, let’s change the decimal points here just because it looks weird having so many decimal points.

20:31

Alright, so what we want to do here is... oh, planned revenue, that did not change. Currency is what I meant to select.

20:43

Alright, perfect.

20:45

And let’s change decimal point.

20:49

OK, so here, again, we want to validate our data, right?

20:54

So I have the total amount of revenue down here in our Cognos report and you can see that it matches, you know, to the cent, which is again what we want and what we expect, right?

21:08

And then again, if we break down some of these revenues by product line, you can see, for example, Personal Accessories: $885,673,307.78. Matches perfectly.

21:30

And let’s continue building our report.

21:34

At this point, I’m just showing off what the connector can do.

21:37

But let’s add a line chart here and let’s break down sales by order method.

21:45

So we’re going to add in some time, some date information here.

21:54

And we’ll do, instead of sales, we’ll do quantity, units sold.

22:01

And we’ll add our order method type here.

22:06

So here we have a breakdown of units sold by different order method types on an annual basis.

22:17

So let’s choose a random data point here.

22:19

Let’s choose this yellow line, which is the web order method.

22:23

And let me format this just a little.

22:26

Let’s add some commas here, so it’s a little easier to read, but you can see... and actually, let me pull up our Cognos data here just to validate.

22:39

So for web in 2012, let me get this right.

22:45

You can see that we have 23,054,131 units here.

22:51

So again, it matches what we see in Cognos and that’s exactly what we want.

22:57

So here you can see how easy it is to build a report in Power BI.

23:00

You don’t even know that you’re using the connector, which is kind of what we want, right?

23:05

We just expect our data to be right.

23:06

And given the validation we did, it looks good.

23:10

Now I want to showcase how we can also use the Power BI service.

23:14

So I’m going to publish this, yes, I want to save my changes, and right?

23:24

And I’m going to publish it to my workspace.

23:28

So this will take a few seconds.

23:33

The caveat here is that you do have to have the Power BI Gateway installed.

23:38

It’s a rather simple setup and configuration, but that does need to be in place in order for the Power BI service to communicate with the Analytics connector.

23:48

Right, So it’s been published.

23:50

Let’s go ahead and open our demo here and I’ll maximize this window.

23:54

But once this loads up, we should see the same report in the Power BI service.

23:59

And the benefit of this is that now you can share this report with someone else in your organization.

24:05

So someone wants to know how much camping equipment was sold.

24:09

This is the report for them.

24:11

And you can see that it’s as interactive as it is in Power BI Desktop.

24:20

And one thing that I want to point out here is that when you publish your report, wrong button, there’s also a semantic model that’s published with it.

24:31

So if I open up this model, I can actually start creating a new report.

24:37

And I didn’t have to, you know, go through that modeling exercise that I did at the beginning.

24:42

So if I bring in revenue, let’s make this a number.

24:49

This also matches what we saw in Cognos, which is exactly what we want.

24:52

So here we’re seeing that we created that semantic model in Power BI Desktop, published it, and it’s ready for everyone else to use.

25:00

That’s pretty cool, right?

25:02

So this concludes the Power BI version of the demo.

25:06

Let’s move over to Tableau and do the same thing.

25:11

So minimize Power BI here and start up Tableau.

25:17

And I’m going to select the SQL Server connection here, connecting to the same server.

25:23

And I just want to point out that instead of using the Cognos embed account, I’m going to use the Cognos embed 2 account.

25:32

And hopefully I remember the password.

25:38

And I made a point of pointing out that we have a different user because you’re going to see row-level security at work here.

25:45

The data is going to look slightly different.

25:47

All right, so we have our sales query package, or database, selected and you can see the rest of the packages that we’ve configured, and we’re going to just bring in the same tables.

25:57

So we’re going to start off with our Sales table here, then add our Branch, Order method, Products and Time.

26:09

All right, great.

26:10

So now we have our model ready to go and we can start building our report.

26:15

So first let’s add that map that we created.

26:20

So all right, we have the countries that we have data points for, and let’s add, I believe it was revenue, over here to the bubble size, and let’s just format this. That looks nice.

26:43

OK, so let’s choose a different country this time and do some validation.

26:48

Let me bring up my Cognos report here.

26:53

And let’s do Australia. It’s right here at the top.

26:55

So we’re expecting 87,563,000.

27:03

Where’s Australia?

27:04

And you can see right there at the bottom right that that number matches with what we see in Cognos.

27:11

Perfect.

27:12

So we can see that accurate data coming through in Tableau as well.

27:17

Let’s create that hierarchy that we made in Power BI. Tableau also supports it.

27:25

Just want to see how seamless.

27:26

I just want to show you guys how seamless it is with using the connector.

27:30

So I believe we started with product line, and let me make sure before this looks funny.

27:39

Yeah, product line and then product.

27:41

So we can go to product line, this little menu button and create a hierarchy, call this product hierarchy, and then we’re going to add our product type to that hierarchy and then our product.

28:08

Perfect.

28:09

So let’s drag in our product hierarchy here and you can see how it expands, just like in Power BI.

28:18

And then let’s add some information here.

28:20

We added revenue and planned revenue, perfect.

28:29

And, and actually I forgot to mention here, you see that these product lines aren’t the same.

28:35

Well, there’s some missing compared to the Power BI version.

28:41

And now, so in Power BI we’re using one user and in Tableau we’re using another user.

28:45

So here there’s some data security built in where Cognos Embed 2, which is the user in Tableau, doesn’t have access to camping equipment or golf equipment.

28:56

So we can see that at play here.

28:58

And we didn’t have to do anything extra in Tableau.

29:01

We didn’t have to you know, do any extra configuration.

29:04

It just works because we’re using those credentials that we signed in with to query our Cognos data.
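
Conceptually, the behavior shown here can be sketched in a few lines of Python. Everything in the sketch is hypothetical (the user identifiers, the made-up revenue figures, and the `query_sales` helper); it only illustrates the idea of a filter keyed to the signed-in user, not how Cognos or the connector actually enforces it.

```python
# Hypothetical revenue rows keyed by product line (figures are made up).
SALES = [
    ("Camping Equipment", 500),
    ("Golf Equipment", 300),
    ("Personal Accessories", 400),
    ("Outdoor Protection", 100),
]

# Hypothetical security filters: product lines hidden from each user.
HIDDEN_PRODUCT_LINES = {
    "cognos_embed": set(),
    "cognos_embed_2": {"Camping Equipment", "Golf Equipment"},
}


def query_sales(user):
    """Apply the signed-in user's filter at query time, whichever tool is asking."""
    hidden = HIDDEN_PRODUCT_LINES[user]
    return [(line, revenue) for line, revenue in SALES if line not in hidden]


for user in ("cognos_embed", "cognos_embed_2"):
    lines = [line for line, _ in query_sales(user)]
    print(user, lines)
```

Because the filter is applied where the data is queried rather than in the reporting tool, the same report definition returns different rows for different users without any extra setup in Tableau or Power BI.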

29:10

All right, let me just format these so they’re dollar figures, and let’s do some validation, right?

29:33

So here actually these aren’t going to line up all the way because of that filtering that we’re doing.

29:43

So let’s well, actually no, they still should actually.

29:48

So let’s do outdoor protection for planned revenue, outdoor protection.

29:53

So yeah, 80,005,200 and six $266.89.

29:58

So there you go.

30:00

The numbers are matching up and that is exactly what we want.

30:03

All right, now we just have one more visualization to create here and that’s our line chart.

30:09

So let’s add our.

30:13

We need time, year here.

30:17

And then we need our quantity from Sales.

30:22

If I can find that, here it goes.

30:30

And then we want to break it down by order method type.

30:36

So here let’s do some more validation and let’s see what we can compare.

30:44

Let’s do e-mail from 2010 just because it’s the top of the table here.

30:49

So let’s see what is e-mail.

30:55

Email is our blue line here. From 2010, so 1,986,395. They match, which is perfect.

31:07

Alright, And then actually did not need to add a sheet.

31:12

Let’s add a dashboard and let’s bring all these in.

31:15

So our report looks like what we made in Power BI At this point.

31:22

This is more, you know, Tableau functionality, but I just want to show you guys that we can make the same report and then publish it up to Tableau Server.

31:38

And then we have our line chart here and perfect.

31:49

So it’s not the prettiest, but it has the data there, right?

31:53

And that’s what’s important.

31:55

And now what I want to show off here is publishing this.

32:00

So I’m going to publish up to my Tableau Server, and the connector also works with Tableau Cloud, which is, you know, the software as a service offering from Tableau.

32:11

If you do have that, then you would need to set up Tableau Bridge, which is kind of the same as the Power BI gateway.

32:18

And the connector works just fine with it.

32:20

There’s really no extra configuration that needs to be done there.

32:27

So while this is processing, cause it’s a couple seconds, we’ll give it a couple more seconds.

32:47

It was working a little bit faster earlier when I was trying this.

32:50

But with demos, things always act funny.

32:55

Alright, here we go.

32:56

So let’s change this to demo.

32:59

And one thing I want to point out here is in the data sources, so you can actually embed the credentials that you used to build a report.

33:07

I’m actually going to keep this prompt user option just because I want to run the same report in Tableau Server as our first user, the Cognos embed user, and show you guys how that row-level security just works.

33:23

So let’s publish this and once it’s available, right?

33:30

Let me maximize this.

33:33

All right, let’s open up our dashboard here.

33:40

Let’s give this a couple of seconds to load.

33:48

I don’t see a loading animation, so I’m just going to refresh this.

33:52

Hopefully that works a little faster.

34:03

Come on, Tableau, let me refresh one more time.

34:19

Oh no, there it is.

34:20

All right.

34:20

I just got to be patient.

34:21

All right, so here we see the Cognos embed 2 credentials, but I’m going to get rid of this 2.

34:27

And I’m going to log in as the Cognos embed user. Give me one second, getting the password, and let’s sign in.

34:40

OK, All right.

34:48

And here you see that we have all our product lines.

34:51

So again, row-level security just working, right? Which is something nice, because I’m sure everyone spent a lot of time setting up those filters in Framework Manager, and it’s great to see them over here on the Tableau and Power BI side.

35:10

So that concludes our demo, and I’m going to go back to the slide deck here.

35:16

Bear with me.

35:18

And I want to talk about return on investment using the connector.

35:23

So first and most importantly, you save time, right?

35:28

You’re not recreating metadata, you’re not spending time converting reports outside of Cognos, and you’re not having to maintain metadata layers between tools.

35:38

You just drag and drop the data and it’s working.

35:42

You’re also reducing your data security risk.

35:45

Any security measures you’ve baked into Cognos to get you into compliance, you know, with different standards such as HIPAA or whatever it may be, are still there, and that gives you peace of mind.

35:56

And you’re also maximizing your Cognos investment.

35:58

Cognos is your source of truth and you’re still leveraging that truth.

36:03

Lastly, you ensure accurate and aligned business metrics.

36:06

Remember, no one needed to remodel your data.

36:09

You’re still using your tried and true Cognos models and everyone is going to be on the same page because of it.

36:17

So here I’ve reached the end of my part.

36:20

Steve, back to you.

36:22

All right, thank you, Arturo.

36:28

Quick note for everybody: if you’re interested in doing a test drive of the Analytics Connector, we do offer a free 30-day trial.

36:36

So if you like what you saw here and you think this would be useful for your organization, do reach out to us.

36:41

That 30 day trial includes help with setting up the connector in your environment, usually driven by Arturo, and we’d offer you 30 days of support as you go through and explore the connector with either Tableau or Power BI.

36:57

You can either type that long URL that’s on the slide into your web browser, or you can just go to senturus.com.

37:04

Click on the menus, go down to the analytics connector section, and you’ll find a link to sign up and request a free 30 day trial.

37:14

Beyond that, I want to just make you aware of some upcoming events and resources on our website.

37:21

So coming up on July 31st, we have a Q&A on data blending using Analyze in Excel and composite models from Power BI.

37:30

Coming up on August 15th, we have a webinar on master data management: de-risking data modernization, AI and Fabric initiatives.

37:39

So check those out.

37:41

We also have an on demand webinar on moving from Cognos to Power BI.

37:45

So if you are looking at migrations, you can check out our on demand webinar that’s on our website at Senturus.com/resources.

37:54

We also have an enormous amount of other information available in the knowledge center there on our website.

38:01

We’ve got blogs, we’ve got past webinars, tip sheets there.

38:05

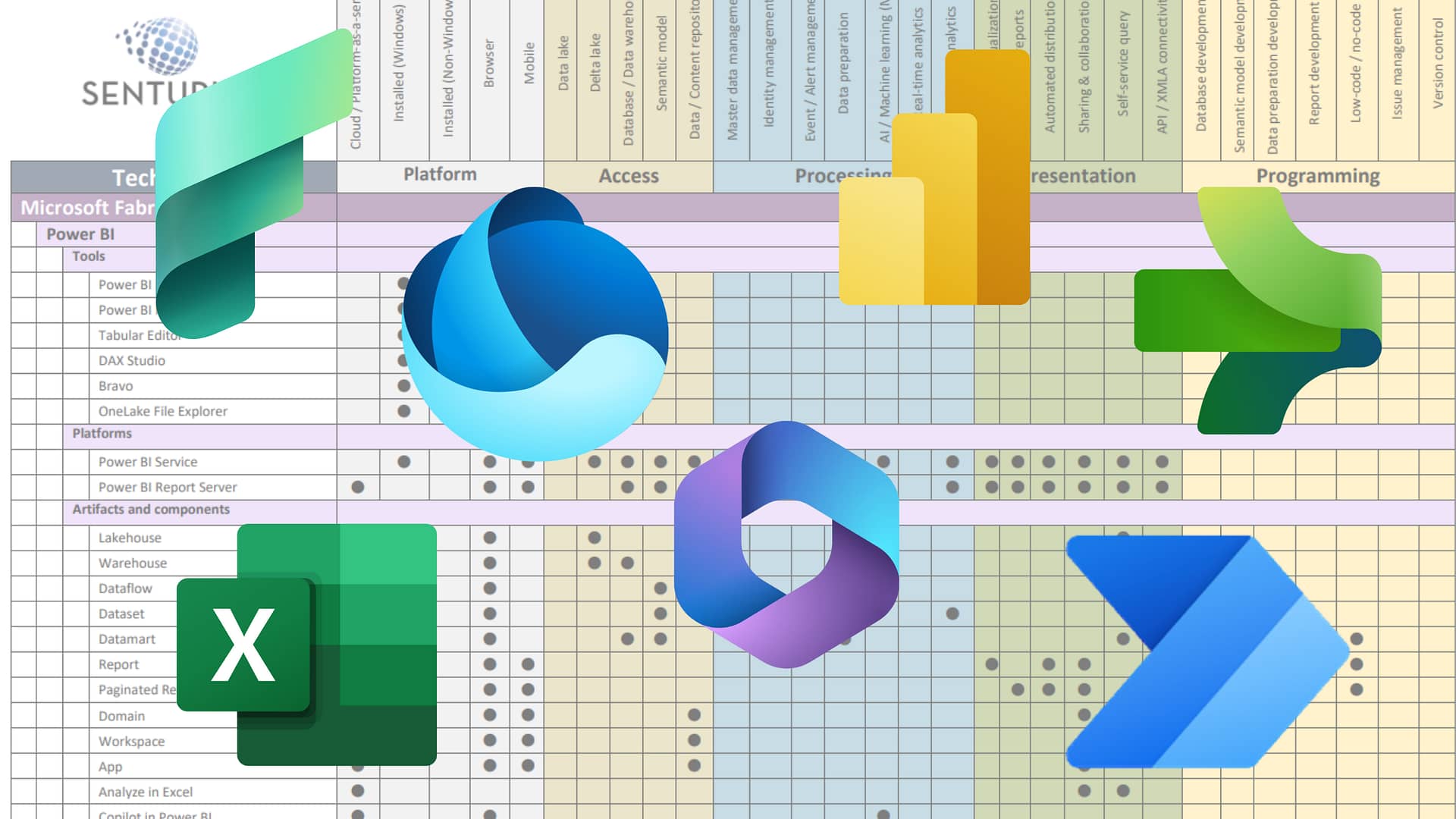

There’s a great matrix on feature sets in the Microsoft stack.

38:10

So just check all that out.

38:12

Senturus.com/resources.

38:17

And well, actually I’ve already talked about some of the additional resources.

38:20

So again, check out the resources page.

38:23

You’ll find a lot of useful details there, lots of valuable information.

38:29

A little bit about Senturus as a company, if you’re not already familiar with us, we are very focused on modern BI, helping you accelerate your BI initiatives, everything from vision and strategy all the way down to hands on development, really helping your users do stuff from the ground up.

38:50

So a whole range of options. Don’t hesitate to reach out to us for any of your BI needs.

38:57

We’ve been in this business for a long, long time.

39:00

Long strong history, 23 years in the BI world.

39:04

We started with Cognos.

39:06

As time has moved on and platforms have, you know, advanced and developed, we’ve expanded out.

39:13

We still do a lot of Cognos.

39:14

So if you need Cognos help, we can be your go to for that.

39:19

We also focus a lot on the Microsoft stack nowadays.

39:24

Go ahead and jump to the very last slide or not the very last slide, but jump ahead a couple of slides there.

39:29

Arturo, we’re going to go ahead and go into Q&A.

39:33

There have been a few questions that came through the Q&A panel while Arturo was presenting.

39:39

So some of those got answered, but in case you’re not tracking the Q&A panel completely, I’m just going to revisit a couple of them and I’ll just kind of feed you some questions here.

39:50

Arturo, one question we got earlier was Veru asked about whether auditing in Cognos also tracks the activity coming from Tableau or Power BI with the connector.

40:04

And Bob gave a short answer already, which is that yes, you know, auditing does show up.

40:09

But Arturo, could you maybe talk a little bit about how and why the activities of the connector show up in the Cognos audit logs?

40:18

Yeah, yeah, yeah.

40:19

So, yeah, short answer: yes, it is supported and it is tracked.

40:23

And the main reason why that works is because when you log in to either Power BI or Tableau, you’re using your Cognos credentials, right, your Cognos user.

40:34

And then what the connector does behind the scenes is it queries your Cognos environment using the Cognos SDK.

40:42

So everything that you do is tracked just how activity in Cognos Analytics would be. Cool.

40:52

And so in the logs, it won’t say this came from the Analytics Connector, right?

40:57

Because the way that we send the query into Cognos, it would show up.

41:02

It just shows that user running a report, right?

41:05

Exactly.

41:05

Yep.
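
As a rough illustration of why the audit trail looks the way it does, here is a minimal Python sketch. It assumes nothing about the real Cognos SDK or the Cognos audit schema: `AuditLog`, `run_query_as` and the user name are hypothetical names made up for this example. The only point is that when a query runs under the user’s own Cognos identity, the audit entry records that user and not the tool that sent the request.

```python
from dataclasses import dataclass, field


@dataclass
class AuditLog:
    """Toy stand-in for an audit store (hypothetical, not the Cognos audit schema)."""
    entries: list = field(default_factory=list)

    def record(self, user: str, action: str) -> None:
        self.entries.append({"user": user, "action": action})


def run_query_as(user: str, query: str, audit: AuditLog, origin: str) -> None:
    """Run a query under the caller's identity.

    `origin` (for example "tableau" or "powerbi") is deliberately not logged,
    mirroring the point above: the log shows the user running a report,
    not the tool the request came from.
    """
    audit.record(user=user, action=f"run report: {query}")


audit = AuditLog()
run_query_as("cognos_embed_user", "Revenue by Product Line", audit, origin="tableau")
run_query_as("cognos_embed_user", "Revenue by Product Line", audit, origin="powerbi")
```

Both calls leave identical entries in `audit.entries`, each attributed to the same user, which is the behavior described in the answer above.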

41:08

Cool.

41:09

I’m going to go to some of the unanswered questions first and then I may drop back into some of these that Bob put some details into the Q&A already.

41:18

So we’ve got a question.

41:19

Does the user need both the Tableau license and a Cognos license in order to use the connector?

41:27

Yeah, the answer to that is yes, right?

41:30

Yeah, so this question came from Chris.

41:33

So Chris, on the Cognos side, you have to have a Cognos license, right?

41:37

Because what we’re doing is we’re sending, we’re basically formulating, you know, dynamically creating a Cognos report on the fly to pull the data.

41:45

And so that requires a Cognos license in order to execute in Cognos.

41:49

And of course on Tableau, and this is also true in Power BI, you need to obviously have licensing that’s applicable for the front end tool to run.

42:05

What else do we have here?

42:06

Chris also asks about a road map for additional BI tools such as Qlik, SAS, Alteryx.

42:12

It looks like Bob is typing an answer.

42:13

Actually, Bob, I don’t know if you’ve got your audio ready, but maybe you could just answer this.

42:17

I’ll put you on the spot.

42:19

Sure.

42:19

Happy to in real time.

42:22

Yeah, I think the short answer is a road map for adding a new BI tool like that would be driven by customer demand.

42:28

So if anyone out there has a significant use case and you would find something like that valuable, feel free to reach out.

42:38

But it would be, you know, a decent investment of both time and money on our side to implement another BI tool.

42:50

Thanks, Bob.

42:51

Also, while I’m thinking of it, just to call everybody’s attention to it, if you do have further questions about the connector, you can reach out to Kay Knowles who has been posting in the chat window.

43:02

So just take note, there’s a link there if you’d like to get some time on Kay’s calendar to talk about the connector or other support services related to Cognos, Power BI, Tableau or migrations, you can reach out to Kay.

43:19

All right, other questions here from Chris.

43:22

Oh, Arturo’s typing it out.

43:23

So I’m going to call you out also, Arturo.

43:25

So Chris is asking if something changes in the framework model in the package from FM, does that same change need to be made in the connector for Tableau as well?

43:36

Can you take that one, Arturo?

43:39

Yes.

43:39

So the answer is no.

43:42

All the data is coming in from Cognos.

43:44

So whatever changes were made, Tableau will reflect them and the connector will automatically refresh.

43:56

Is that right?

43:57

Arturo, like, it will detect.

43:58

So if you republish a package in Cognos, the connector automatically detects that that’s happened and picks up the package changes without any intervention on the user’s part.

44:08

Yep, exactly.

44:09

It’ll pick up any changes you make to your model and yeah, you’ll see those reflected in your tool of choice.
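
The webinar does not say how the connector notices a republished package, so the following is only a sketch of one common way to detect a metadata change: keep a fingerprint of the last metadata seen and compare it with the current one. `fingerprint`, `MetadataCache` and the sample metadata are all hypothetical and are not the connector’s actual mechanism.

```python
import hashlib
import json


def fingerprint(package_metadata: dict) -> str:
    """Stable hash of a package's metadata (illustrative only)."""
    canonical = json.dumps(package_metadata, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class MetadataCache:
    """Remembers the last fingerprint seen for each package name."""

    def __init__(self) -> None:
        self._seen = {}

    def has_changed(self, package_name: str, metadata: dict) -> bool:
        """Return True when the metadata differs from the last one seen.

        The first call for a package also returns True, since nothing
        has been cached for it yet.
        """
        new_print = fingerprint(metadata)
        changed = self._seen.get(package_name) != new_print
        self._seen[package_name] = new_print
        return changed


cache = MetadataCache()
v1 = {"Products": ["Product line", "Product name"]}
v2 = {"Products": ["Product line", "Product name", "Product color"]}

first_load = cache.has_changed("Sales", v1)   # nothing cached yet
unchanged = cache.has_changed("Sales", v1)    # same metadata as before
republished = cache.has_changed("Sales", v2)  # a column was added
```

Under this assumption, a front-end tool would only need to reload its field list when `has_changed` reports a difference, with no action from the user.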

44:17

Great.

44:19

All right, so I’m going to drop into some of the answered questions here.

44:24

So there was a question about refreshing the data in the Power BI service.

44:28

I’m just going to call you out again, Bob. You already answered it, but you can probably just kind of encapsulate it: the differences with DirectQuery and how that works with refreshing data once things are published in the service.

44:43

Sure.

44:43

Yeah.

44:44

So I think that the bottom line is that the connector’s going to work like any other Power BI data source that you’ve published to the Power BI service.

44:52

And so if the model is in DirectQuery mode, meaning all the queries that the users are running when they’re getting reports are getting passed back in real time, that’s going to go through a Power BI gateway and then back to your Cognos system, where the queries run, and then that data returns to the Power BI service to display to the user.

45:14

So there is no real refresh at that point in time.

45:16

The data is just always kind of live or, you know, it’s just sending live queries.

45:20

And the other use case would be if maybe you set up, you know, part of a model or maybe a Cognos report, because we support running Cognos reports, and you use that as an extracted data source.

45:35

You can set that up in the Power BI service just like any other Power BI semantic model, to have a refresh schedule or to go kick off manual refreshes.

45:46

But from a data pathway perspective, it’s doing the same thing.

45:48

It’s going to have to go through a Power BI gateway to get back to your Cognos system to grab that data.

45:53

And then that data is getting stored temporarily in Power BI service as part of that semantic model.
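
To make the difference between the two modes concrete, here is a small Python sketch. It is a toy model under stated assumptions, not Power BI or Cognos code: `Source`, `LiveModel` and `ExtractModel` are hypothetical classes and the sample row is made up. The live model sends a query to the source on every report view, while the extract model serves a stored copy that only changes on refresh.

```python
import copy


class Source:
    """Toy stand-in for the Cognos side; counts how often it is queried."""

    def __init__(self, rows):
        self.rows = rows
        self.query_count = 0

    def query(self):
        self.query_count += 1
        return copy.deepcopy(self.rows)


class LiveModel:
    """DirectQuery-style: every report view sends a query to the source."""

    def __init__(self, source):
        self.source = source

    def view_report(self):
        return self.source.query()


class ExtractModel:
    """Import-style: data is copied on refresh and served from that copy."""

    def __init__(self, source):
        self.source = source
        self.snapshot = []

    def refresh(self):
        self.snapshot = self.source.query()

    def view_report(self):
        return self.snapshot


source = Source([{"product_line": "Camping Equipment", "revenue": 100}])
live = LiveModel(source)
extract = ExtractModel(source)
extract.refresh()

source.rows[0]["revenue"] = 250  # data changes after the refresh

live_view = live.view_report()        # queries the source again
extract_view = extract.view_report()  # served from the stored snapshot
```

In this sketch the live view reflects the changed revenue right away, while the extract keeps showing the old value until `refresh()` runs again, whether on a schedule or manually.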

46:03

Great.

46:03

Thank you, Bob.

46:04

I’m going to hit you up again for just one more question, Bob, which you already answered, but it’s a pretty technical kind of detail piece.

46:12

So I’m just going to put it out here.

46:14

Chris asked whether the connector brings forward the joins and the cardinality that are in the package, or whether it just brings those forward for Tableau but not for Power BI.

46:23

Sure.

46:24

Yeah.

46:24

And this is it.

46:26

It is a confusing point, because the joins that the connector’s going to leverage are the joins in the Cognos model.

46:33

But the BI tools have to think of these tables as being related to one another to send the correct queries.

46:40

Otherwise they would send queries thinking of the tables being independent from each other.

46:46

So the only purpose of that 888 link is just to say, hey, these tables are related to one another. And under the covers, as it goes through the translation layers and gets sent to Cognos,

47:00

those joins are eventually ignored, more or less, in favor of the Cognos relationships. Tableau is automatically able to figure out those linkages, whereas in Power BI we have to do it manually.

47:13

And exactly, it’s that manual exercise of dragging 888 link to 888 link that Arturo was demonstrating.

47:21

You just don’t have to do that in Tableau because when it sees same named columns in two tables, it just assumes that they’re connected.
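
The same-named-column behavior described here can be sketched in a few lines of Python. This is only an illustration of the idea, not how Tableau is implemented: `infer_links` is a hypothetical helper, and the table names and the `LINK` column are made-up stand-ins for the link column mentioned above.

```python
from itertools import combinations


def infer_links(tables: dict) -> list:
    """Return (table_a, table_b, shared_columns) for tables sharing column names."""
    links = []
    for (name_a, cols_a), (name_b, cols_b) in combinations(tables.items(), 2):
        shared = sorted(set(cols_a) & set(cols_b))
        if shared:
            links.append((name_a, name_b, shared))
    return links


# Hypothetical tables; "LINK" stands in for the shared link column.
tables = {
    "Products": ["LINK", "Product line", "Product name"],
    "Sales": ["LINK", "Revenue", "Quantity"],
    "Calendar": ["Year", "Month"],
}
links = infer_links(tables)
```

Here `links` pairs `Products` with `Sales` through the shared column, and `Calendar` is left unlinked. As noted above, the link only tells the front-end tool that the tables are related; the actual joins still come from the Cognos model.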

47:31

Cool.

47:32

All right, there’s just one more question which is very simple and it’s been answered, but I’m going to answer it before we wrap up.

47:39

This is an easy one, which is, does row level security also apply to Power BI?

47:43

Of course, Arturo did his demo of row level security with Tableau.

47:47

The answer is yes, row level security.

47:49

And you know, it happens to be in Tableau that Arturo was doing that demo, but the same connector code and logic runs under the hood regardless of what your front end tool is.

48:00

So row-level security gets applied based on the rules in your Cognos package and then that data flows out to whatever front end tool you’re using.
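
A minimal sketch of that idea, assuming made-up users, rows and rules: the filter is applied once at the source, keyed on the signed-in user, so every front end receives the same restricted rows. `SECURITY_FILTERS`, `query_as` and `render` are hypothetical names and do not reflect how Cognos stores or evaluates security filters.

```python
ROWS = [
    {"product_line": "Camping Equipment", "revenue": 120},
    {"product_line": "Golf Equipment", "revenue": 80},
    {"product_line": "Outdoor Protection", "revenue": 40},
]

# Hypothetical security rules: which product lines each user may see.
# None means "no restriction".
SECURITY_FILTERS = {
    "restricted_user": {"Camping Equipment"},
    "full_access_user": None,
}


def query_as(user: str) -> list:
    """Apply the user's filter at the source, before any front end sees the data."""
    if user not in SECURITY_FILTERS:
        raise PermissionError(f"unknown user: {user}")
    allowed = SECURITY_FILTERS[user]
    if allowed is None:
        return list(ROWS)
    return [row for row in ROWS if row["product_line"] in allowed]


def render(front_end: str, user: str) -> list:
    """Both front ends call the same query path, so they get the same rows."""
    return query_as(user)


tableau_rows = render("tableau", "restricted_user")
power_bi_rows = render("powerbi", "restricted_user")
```

Because `render` ignores which front end is asking, `tableau_rows` and `power_bi_rows` are identical, which is the point being made about the same connector logic running under the hood.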

48:13

And with that, that’s the end of our incoming questions.

48:18

Oh, actually there is one last question.

48:19

Brad just put a question there about whether it would be the same process for a package and a data module.

48:27

So can you maybe speak to that, Arturo, if there’s any differences with sourcing data from a data module versus the package?

48:35

Yeah, no difference.

48:37

You would interact with them just the same.

48:44

Cool.

48:44

All right.

48:45

Well, with that, I think you can go on to our final slide there, Arturo.

48:49

And thank you everybody for attending today.

48:52

It’s always great to have you here and we do hope to see you at a future Senturus webinar.

48:58

Arturo,

48:59

thanks for your presentation today.

49:01

Great demos, great Q&A session there.

49:05

And with that, I think we can wrap up.

49:07

So again, thank you everybody and have a great rest of the day.